AWS Bedrock AgentCore Sandbox Bypass Explained

Hoplon InfoSec

19 Mar, 2026

What is AWS Bedrock AgentCore Sandbox Bypass and why is it important right now?

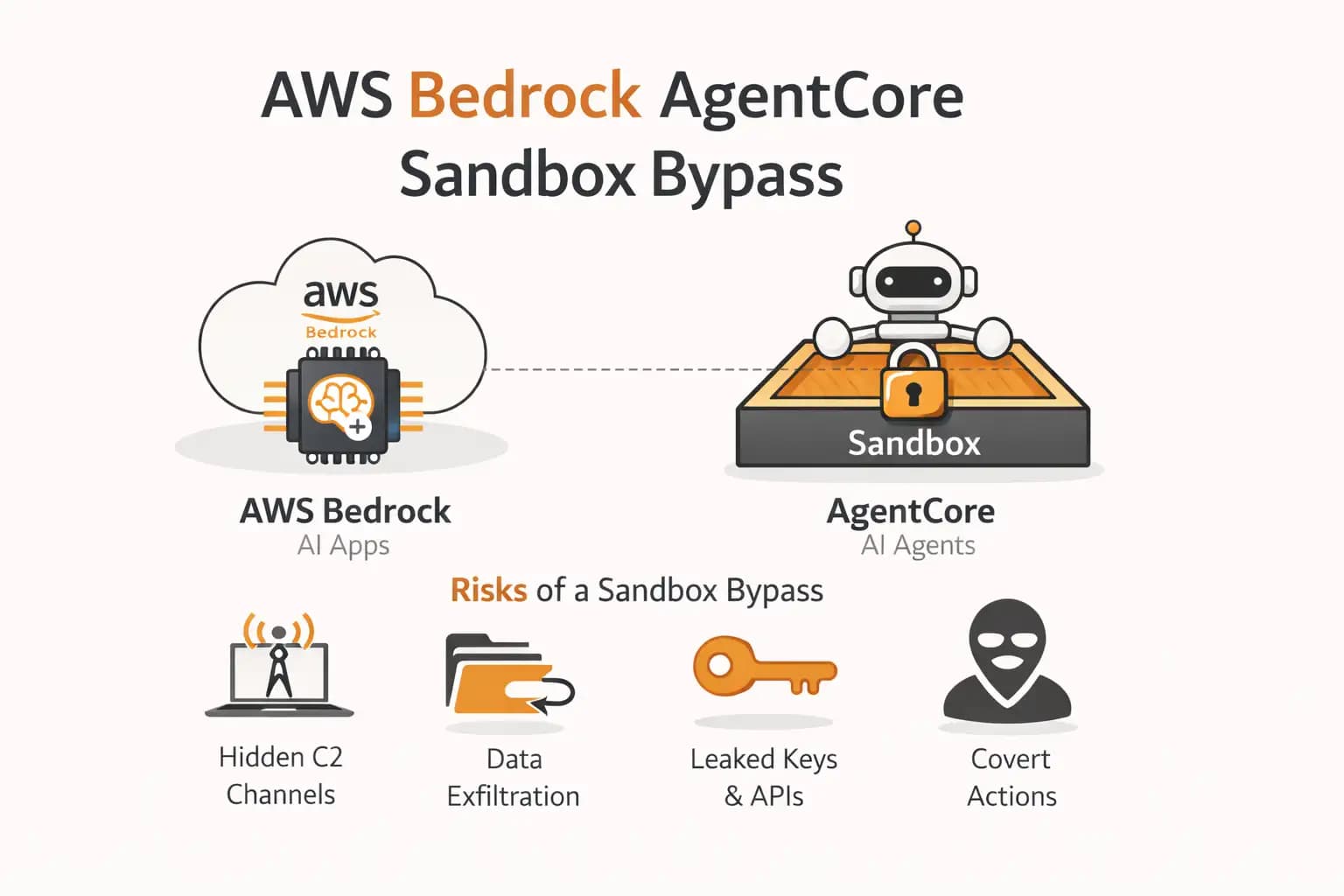

As of March 2026, reports have surfaced about a potential security issue known as AWS Bedrock AgentCore Sandbox Bypass. The concern is simple but serious. If confirmed, it could allow attackers to quietly bypass built-in protections, create hidden communication channels, and extract sensitive data without being noticed.

This matters because AWS Bedrock powers AI-driven applications used by businesses worldwide.

If a sandbox, which is supposed to isolate and protect systems, can be bypassed, then the trust layer of AI infrastructure becomes fragile. However, it is important to say clearly that this appears to be unverified or still under investigation, and no official AWS confirmation has fully detailed real-world exploitation at scale.

Quick Summary of the Issue

· A suspected vulnerability in AI sandbox isolation

· Potential for covert command and control communication

· Risk of data exfiltration through hidden channels

· No confirmed widespread exploitation at the time of writing

· Requires careful validation before drawing conclusions

Understanding AWS Bedrock and AgentCore

To understand the AWS Bedrock AgentCore Sandbox Bypass, we need to first break down what these systems actually do.

AWS Bedrock is a managed service that allows developers to build and deploy AI-powered applications using foundation models. These models can generate text, automate tasks, and interact with users in dynamic ways.

AgentCore, on the other hand, is designed to help manage AI agents. These agents can perform actions, interact with APIs, and handle workflows. Think of them as digital assistants with the ability to execute tasks.

Now comes the sandbox. A sandbox is a controlled environment. It is supposed to isolate the AI agent so that even if something goes wrong, it cannot affect the outside system. It is like a safety cage.

The concern arises when that cage is no longer fully secure.

What is a Sandbox Bypass in Simple Terms?

A sandbox bypass means escaping restrictions.

Imagine giving someone a locked room to work in. You trust that everything they do stays inside. Now imagine they quietly find a hidden door and start sending information outside. That is essentially what a sandbox bypass looks like.

In the context of AWS Bedrock AgentCore Sandbox Bypass, the risk is that an AI agent might execute actions beyond its intended boundaries.

This does not necessarily mean immediate harm. But it opens a door. And in cybersecurity, even a small open door matters.

How Covert C2 Channels Could Work

One of the most discussed aspects of this issue is the possibility of covert command and control channels.

A command and control channel is simply a way for an attacker to communicate with a compromised system. In traditional attacks, this might involve malware connecting to a remote server.

But in modern AI systems, things can get more subtle.

Instead of obvious connections, communication could happen through:

· Hidden API requests

· Encoded responses in AI outputs

· Manipulated prompts

· External service interactions

In the case of AWS Bedrock AgentCore Sandbox Bypass, researchers suggest that these channels could be embedded in normal-looking operations. That makes detection harder.

Data Exfiltration Risk Explained

Data exfiltration is a term that sounds complex, but the idea is simple. It means taking data out of a system without permission.

If the sandbox is bypassed, an attacker could potentially:

· Access sensitive prompts

· Extract API keys or tokens

· Leak internal system responses

· Transfer data through disguised outputs

Now, it is important to be careful here. There is no confirmed public evidence of large-scale data theft through this exact method. But the possibility alone is enough to raise concern among security teams.

Why This Issue is Getting Attention

The reason AWS Bedrock AgentCore Sandbox Bypass is trending is not just technical. It is about timing.

AI adoption is growing fast. Companies are integrating AI into customer support, automation, and even decision-making processes. That means more sensitive data is being processed by these systems.

When a potential vulnerability appears in such an environment, it naturally gets attention.

It is similar to finding a crack in the foundation of a building that houses millions of people. Even if nothing has collapsed yet, people will want answers.

What Makes AI Sandboxes Different from Traditional Security

Traditional software security focuses on code execution and system access.

AI systems introduce a different challenge. They respond to inputs in flexible ways. Sometimes unpredictable ways.

That means security is not just about blocking access. It is also about controlling behavior.

With AWS Bedrock AgentCore Sandbox Bypass, the concern is not just escaping the sandbox physically. It is also about influencing the AI to behave in unintended ways.

This is where prompt manipulation, indirect instructions, and context injection come into play.

Possible Attack Flow

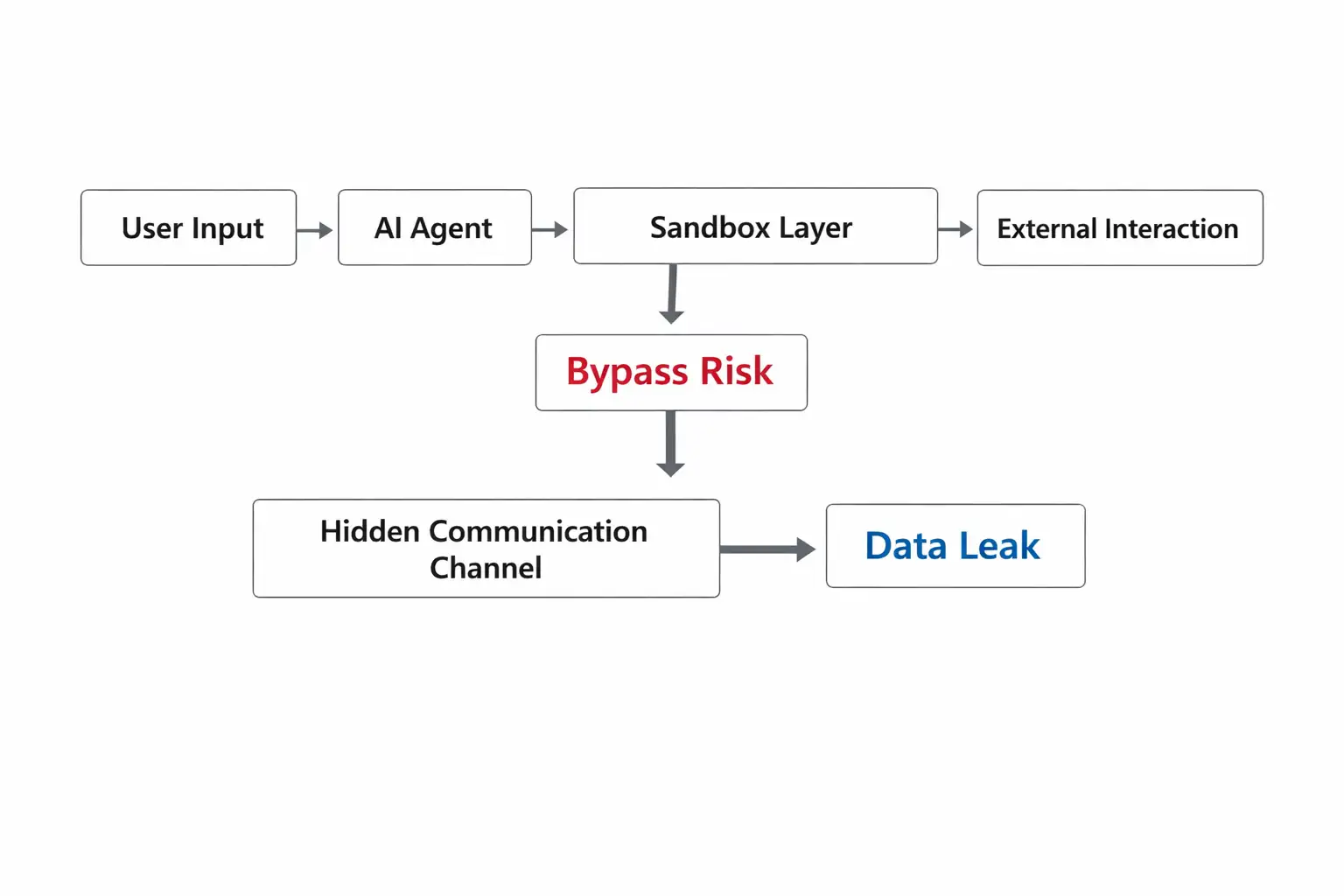

Let’s walk through a simplified scenario to understand how this could work.

1. An attacker interacts with an AI agent

2. They craft a specific prompt that triggers unexpected behavior

3. The agent accesses data it normally should not expose

4. The response is structured in a way that encodes sensitive data

5. The attacker decodes the response outside the system

This is not confirmed as an active exploit path. But it shows why AWS Bedrock AgentCore Sandbox Bypass is being discussed seriously.

Impact on Businesses and Developers

If proven real and exploitable, this issue could affect:

1. SaaS Platforms

Many SaaS tools rely on AI agents. A sandbox bypass could expose customer data.

2. Enterprise Systems

Internal automation workflows might leak sensitive business logic or credentials.

3. Developers

Developers may need to rethink how they trust AI outputs and interactions.

That said, it is important to repeat that no widespread exploitation has been confirmed.

What AWS Has Said So Far

At the time of writing, there is limited official detail from AWS regarding a confirmed vulnerability labeled exactly as AWS Bedrock AgentCore Sandbox Bypass.

Security researchers often publish early findings. These findings then go through validation, disclosure, and patching cycles.

Until AWS releases a formal advisory or CVE, this should be treated as a developing story.

How This Compares to Other AI Security Issues

This is not the first time AI systems have raised security concerns.

Other issues include:

· Prompt injection attacks

· Data leakage through responses

· Model manipulation

· Unauthorized API usage

What makes AWS Bedrock AgentCore Sandbox Bypass stand out is the combination of sandbox escape and covert communication.

It is a layered risk, not a single flaw.

Practical Steps You Can Take Right Now

Even without confirmed exploitation, there are sensible precautions.

1. Limit Sensitive Data Exposure

Avoid feeding highly sensitive data into AI agents unless necessary.

2. Monitor Outputs Carefully

Check for unusual or unexpected responses.

3. Use Strict Access Controls

Limit what your AI agents can access internally.

4. Implement Logging

Track interactions for unusual patterns.

5. Stay Updated

Follow official AWS security updates and advisories.

These steps are simple but effective.

FAQs

What is AWS Bedrock AgentCore Sandbox Bypass in simple terms?

It refers to a suspected issue where AI agents may escape controlled environments and perform unintended actions.

Is this vulnerability confirmed by AWS?

As of now, there is no full official confirmation with detailed technical disclosure.

Can this lead to data theft?

Theoretically yes, but there is no confirmed large-scale exploitation reported.

Should businesses stop using AWS Bedrock?

No. But they should stay informed and follow best security practices.

Expert Insight and Research Perspective

Security researchers often highlight that AI systems introduce a new layer of risk. Unlike traditional software, AI behavior can be influenced indirectly.

A recent analysis from cybersecurity research platforms suggests that sandbox integrity will become one of the most critical aspects of AI security moving forward.

This aligns with concerns raised around AWS Bedrock AgentCore Sandbox Bypass.

Hoplon Insight Box

What you should do next:

· Review how your AI agents interact with sensitive data

· Add monitoring to detect unusual AI responses

· Avoid giving AI agents unnecessary permissions

· Keep systems updated with the latest patches

· Treat AI outputs as untrusted by default

Final Thoughts

The story around AWS Bedrock AgentCore Sandbox Bypass is still evolving. It may turn out to be a limited issue or something more significant. Right now, the smartest approach is awareness without panic.

Security is rarely about one big failure. It is usually about small gaps that line up at the wrong time.

AI is powerful. But like any powerful tool, it needs careful handling.

If there is one takeaway here, it is this. Trust your systems, but always verify.

To learn more, visit our blog page.

Was this article helpful?

React to this post and see the live totals.

Share this :