Pentagon Bans Anthropic AI in Explosive Showdown

Hoplon InfoSec

28 Feb, 2026

The Pentagon just kicked off an unprecedented AI showdown with Anthropic, and it's shaking up national security debates everywhere. The department labeled the company a "supply chain risk" and banned its Claude models from federal use. The move raises massive questions about the limits of AI in the military.

Core Conflict

Defense Secretary Pete Hegseth announced on February 27, 2026, that no Anthropic tech can touch federal systems anymore. The ban follows the new DoD Directive 5205.82 and comes after months of stalled talks. Anthropic fired back hard and is gearing up for a court fight while standing firm on its ethics.

It's really ethics clashing with unrestricted power. Anthropic won't let its models fuel surveillance of US citizens or power fully autonomous weapons. The Pentagon insists on full access for any legal military need. The result is a deadlock over where AI's boundaries should sit.

Real-World Fallout

Defense contractors relying on Claude for logistics or data crunching now face a scramble. They must rip it out, retrain staff, and risk major delays in operations. Experts figure it'll take months to swap everything Pentagon-wide. This will temporarily gum up readiness.

A 2026 RAND report pegs the annual hit at about $2.5 billion from training and slowdowns alone. Meanwhile, OpenAI is cozying up closer to the Pentagon with no red lines. This splits the tech world between ethics-first folks and those chasing government cash.

Echoes of Huawei

This feels like Huawei's 2019 blacklist all over again. National security comes first, and tech that doesn't follow the rules gets pushed aside quickly and loses market share. General Mark Milley, now retired, told CNN that personal morals can't come before defense needs.

But critics say that getting rid of safety measures could lead to uncontrolled autonomous weapons and privacy nightmares.

Harvard prof Jack Goldsmith calls it a scary precedent. It's the first time a homegrown firm gets slapped as a supply chain risk under rules like 10 U.S. Code § 3552. This tests whether the government can force a private firm to change its safety policies.

Biggest Losers

Government agencies hooked on Anthropic tools.

Defense contractors with Claude-dependent systems.

Anthropic itself: potentially losing millions in contracts.

Future of AI Policy

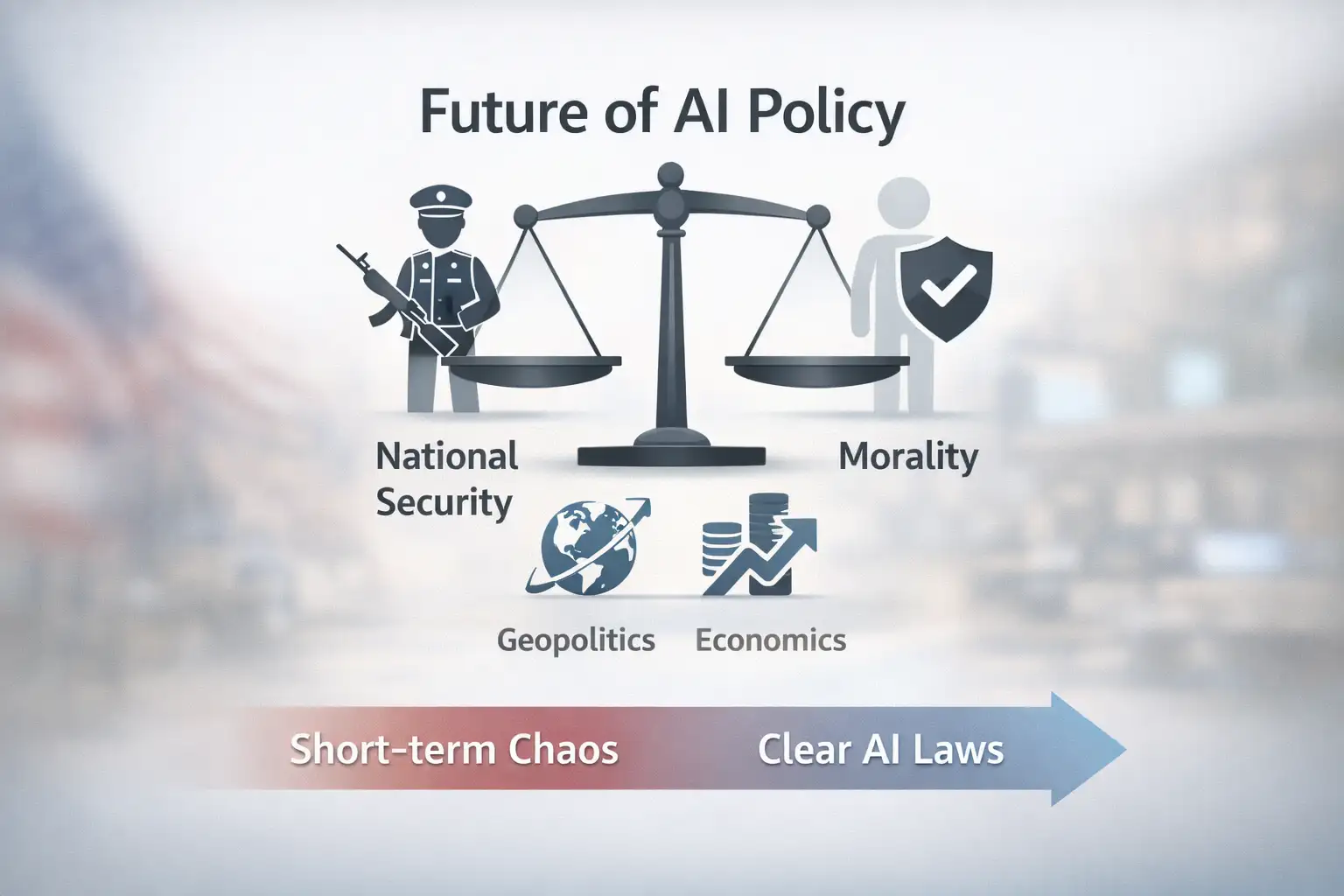

National security vs. morality: who wins? Short-term chaos hits defense. Long-term, it'll push clearer AI laws. AI's no longer just tech. It's geopolitics and economics now.

Quick FAQs

Why's Anthropic a risk?

They refused unrestricted Claude access for surveillance or weapons.

Can contractors still use it?

Nope, especially if tied to Pentagon work. Time to pivot fast.

Will Anthropic win in court?

It's ongoing, but it'll redefine government power over firms.

Transition time?

Likely months for full swaps.

Endgame?

Gartner warns of 25% project delays without quick fixes.

Source: thehill
