UK Fines Reddit $19 Million for Children’s Data Misuse

Hoplon InfoSec

25 Feb, 2026

The era when social media sites operated like the "Wild West" is quickly coming to an end. In the past, platforms were casual about who could join and how they handled users' data. With the rise of strict rules like the UK's Children's Code, the bar for safety has risen sharply.

This shift from passive oversight to active, aggressive enforcement should serve as a wake-up call. Companies can't just have a terms of service page anymore; they have to show that they are protecting their most vulnerable users. The shift has recently led to a large fine that is a landmark case for the industry.

UK Fines Reddit $19 Million for Collecting Data on Kids

The UK Information Commissioner's Office (ICO) made news recently when it fined Reddit about $19 million (£15.4 million). This wasn't a random choice; it was based on a thorough look at how the site handles the personal information of minors.

The main problem is that data belonging to kids under 13 was being used without permission. The investigation found that Reddit didn't have a "gatekeeping" system that worked, at least not in a technical sense. Because it didn't have good age-assurance measures in place, the platform effectively let algorithms use the personal information of young children.

This information was then used to create profiles and, in some cases, to target ads at specific users. This goes against the UK Data Protection Act, which says that a child's best interests must come before a company's business goals.

Why Was This $19 Million Fine Necessary?

You might be wondering why a fine of $19 million was thought to be fair. The truth is that when platforms don't pay attention to age limits, they aren't just breaking a small rule; they are putting kids at risk of advanced data mining.

Reddit used a "self-declaration" model for a long time, which means they just believed what the user said. The ICO said that asking "Are you over 13?" is not enough for a platform with millions of users.

This fine is a way to make things right by reminding big tech companies that taking personal data from people who can't legally agree to it is a serious violation of trust and the law. It is about going from a "don't ask, don't tell" policy to a "verify and protect" rule.

What Modern Age Assurance Does and How It Works

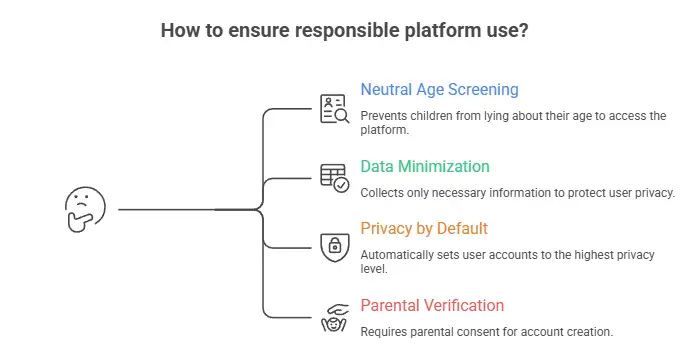

So, what does a responsible platform look like in the world today? To avoid these huge fines, businesses now have to use a number of technical layers:

• Neutral Age Screening: Using tools that don't make kids lie about their age to get in.

• Data Minimization: Collecting only the minimum amount of information needed to provide the service.

• Privacy by Default: Making sure that if a minor does join, their account is automatically set to the highest level of privacy so that their profile is not public.

• Parental Verification: Making a digital link that requires a parent or guardian to clearly say they agree to the account's existence.
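As a rough illustration of how these layers might fit together at sign-up, here is a minimal Python sketch. Everything in it is hypothetical: the `Account` fields, the `create_account` function, the `parental_consent` flag, and the 18-year cutoff for treating a user as a minor are assumptions made for this example, not Reddit's system or a design specified by the ICO. A typed-in birth date is still self-declared, so a real service would likely need to pair this with stronger age assurance.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

MIN_AGE = 13    # age threshold discussed in this article
ADULT_AGE = 18  # assumption: users under 18 get the protective defaults


def age_on(birth_date: date, today: date) -> int:
    """Return the number of completed years between birth_date and today."""
    years = today.year - birth_date.year
    # Subtract one if this year's birthday has not happened yet.
    if (today.month, today.day) < (birth_date.month, birth_date.day):
        years -= 1
    return years


@dataclass
class Account:
    username: str
    is_minor: bool          # data minimization: keep a flag, not the birth date
    profile_public: bool
    personalized_ads: bool


def create_account(
    username: str,
    birth_date: date,
    today: date,
    parental_consent: bool = False,
) -> Optional[Account]:
    """Neutral age screen: ask for a birth date instead of 'Are you over 13?'."""
    age = age_on(birth_date, today)

    # Parental verification: under-13s need explicit consent from a guardian.
    if age < MIN_AGE and not parental_consent:
        return None

    # Privacy by default: minors start private and without ad personalization.
    is_minor = age < ADULT_AGE
    return Account(
        username=username,
        is_minor=is_minor,
        profile_public=not is_minor,
        personalized_ads=not is_minor,
    )
```

In this sketch, the birth date is used once to derive the `is_minor` flag and is then discarded, which is one simple way to apply data minimization to the age check itself.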

Who is Really Affected by This Important Decision?

This fine will have effects on a number of groups:

1. The Younger Generation

Children in the UK will now have a much harder time getting on platforms that aren't made for them, which makes their digital footprint much smaller.

2. Business Owners

If you run a digital service, it's clear that privacy is now an issue that needs to be dealt with at the "boardroom level." If you don't follow these rules, you could end up with fines that wipe out your annual profits.

3. Parents

The legal safety net is now much stronger. Parents can be more sure that the law is holding these platforms responsible for the safety of their kids.

Help from a Professional

It's very hard for any modern business to deal with the messy intersection of legal red tape and business growth. Hoplon Infosec comes in here. We give you the framework and the "security eyes" to make sure that your data processing is not only fast but also legal.

We help businesses build a reputation for trust instead of a history of fines by setting up strong audit trails and compliance checks.

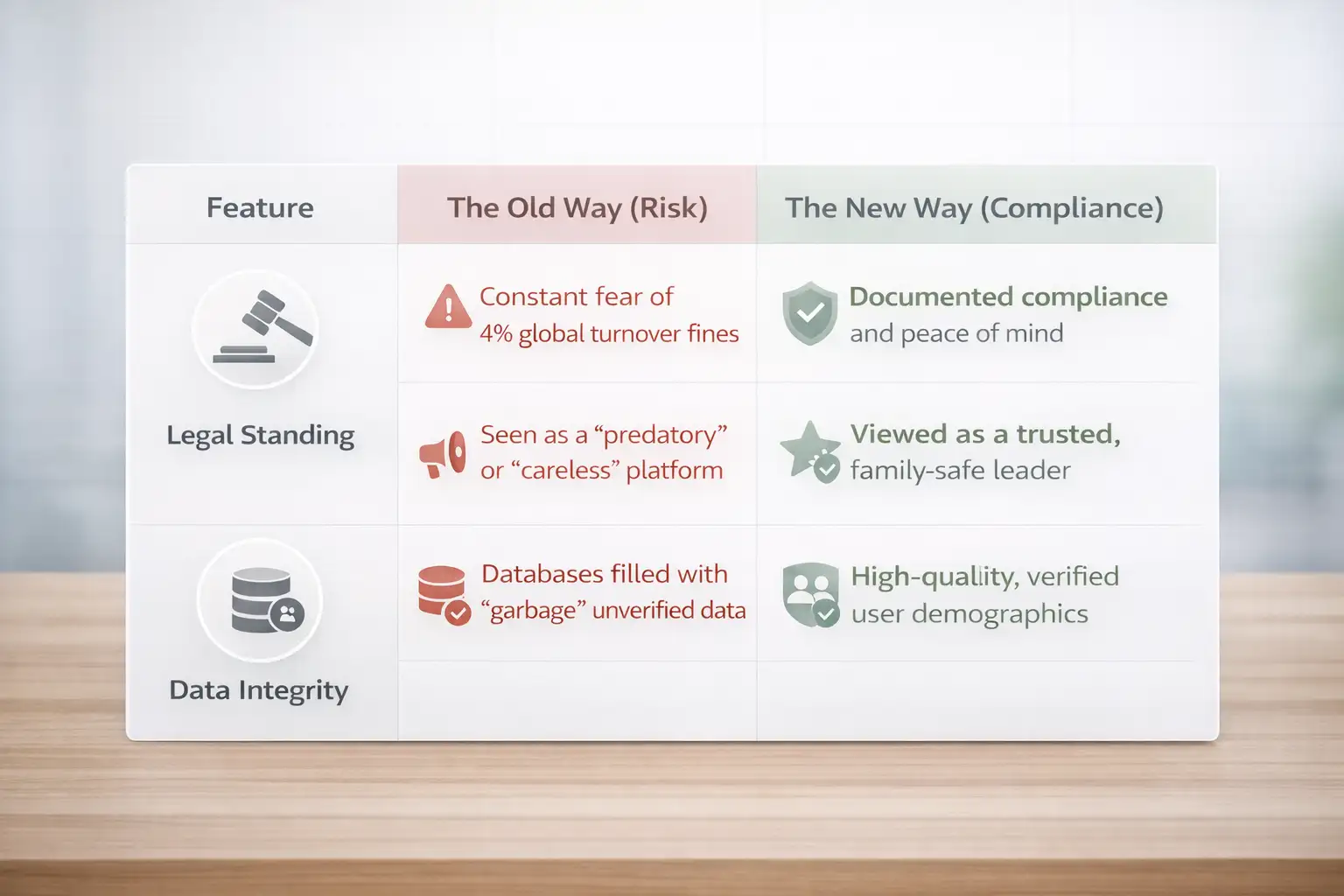

Outcomes That Can Be Measured: Doing It the Right Way

What Should Your Business Do Next?

The first step for you as a decision-maker is to carefully look over how you collect data. If there is even a small chance that kids are using your service, you must have an age-verification gate.

You should also check your internal AI and machine learning rules to make sure that you aren't using any data from kids to train your models.
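One way to picture that second check is a filter that runs before any data reaches a training pipeline. The sketch below is a simplified Python example under stated assumptions: the record layout and the `is_minor` field are hypothetical, and a real pipeline would depend on how your own systems store age information.

```python
from typing import Dict, Iterable, List


def training_eligible(records: Iterable[Dict]) -> List[Dict]:
    """Keep only records explicitly marked as belonging to an adult.

    Records flagged as minors, and records with no age flag at all,
    are both excluded (a deliberately conservative choice).
    """
    return [r for r in records if r.get("is_minor") is False]


# Hypothetical sample data for illustration only.
records = [
    {"user": "a", "is_minor": False, "text": "adult post"},
    {"user": "b", "is_minor": True, "text": "child post"},
    {"user": "c", "text": "unknown age"},
]

print([r["user"] for r in training_eligible(records)])  # prints ['a']
```

Excluding records with an unknown age status, rather than only those flagged as minors, means a missing flag can never quietly let a child's data into a model.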

Frequently Asked Questions

Why was the fine set at $19 million?

The ICO doesn't just choose a number. They figure it out based on how bad the privacy breach was, how many kids were put in danger, and the company's total global revenue to make sure the fine hurts enough to make a difference.

Is this a problem only for businesses in the UK?

No. The fine came from the UK, but platforms like Reddit that are used all over the world usually update their whole system to meet the highest standards. If you have users in the UK or EU, these rules can apply to you no matter where your office is located.

What is the "Children's Code"?

It is a list of 15 rules that explain how to make an online service safe for kids. It talks about everything from tracking where kids are to how "likes" and "streaks" keep them interested.

Summary for Executives

The $19 million fine against Reddit marks a lasting change in the digital world. We are leaving behind a time when "growth at all costs" was okay, even if it meant taking data from kids. The main benefit is a much safer internet for children.

In this new era, Hoplon Infosec is here to help you. We help businesses deal with these complicated rules so that your data use stays a valuable asset instead of a ticking legal time bomb.

Author Credibility: A cybersecurity expert who studies international data privacy and how tech regulation has changed wrote this report.
