WiFi Human Activity Recognition Through Walls Explained


Hoplon InfoSec

09 Mar, 2026

Can WiFi really detect body movement, posture, and even breathing through walls?

In limited but increasingly practical setups, yes. Carnegie Mellon University research on DensePose from WiFi shows that Channel State Information, or CSI, can be used with deep learning and CSI-capable WiFi hardware to estimate human pose without cameras. The public RuView project claims to extend the same approach to motion and vital signs.

That matters now because the barrier to experimentation appears to be getting lower, while privacy and compliance rules have not kept pace.

Introduction

Traditional indoor monitoring usually meant cameras, wearables, or expensive radar and LiDAR.

Now, WiFi Human Activity Recognition Through Walls is moving some of that sensing work into ordinary wireless environments by reading how human movement changes radio signals. The result is a different kind of visibility.

No lens. No obvious sensor in the room. In some cases, still enough signal detail to infer pose, motion, breathing, and heart-rate ranges.

That is the main business takeaway. WiFi Human Activity Recognition Through Walls may reduce dependence on cameras in some settings, but it also creates a quieter surveillance surface.

For a business leader, that changes the conversation from “Is this an IoT feature?” to “Is this now part of our physical-layer threat model?” The answer, based on the public material now available, appears to be yes.

What It Is

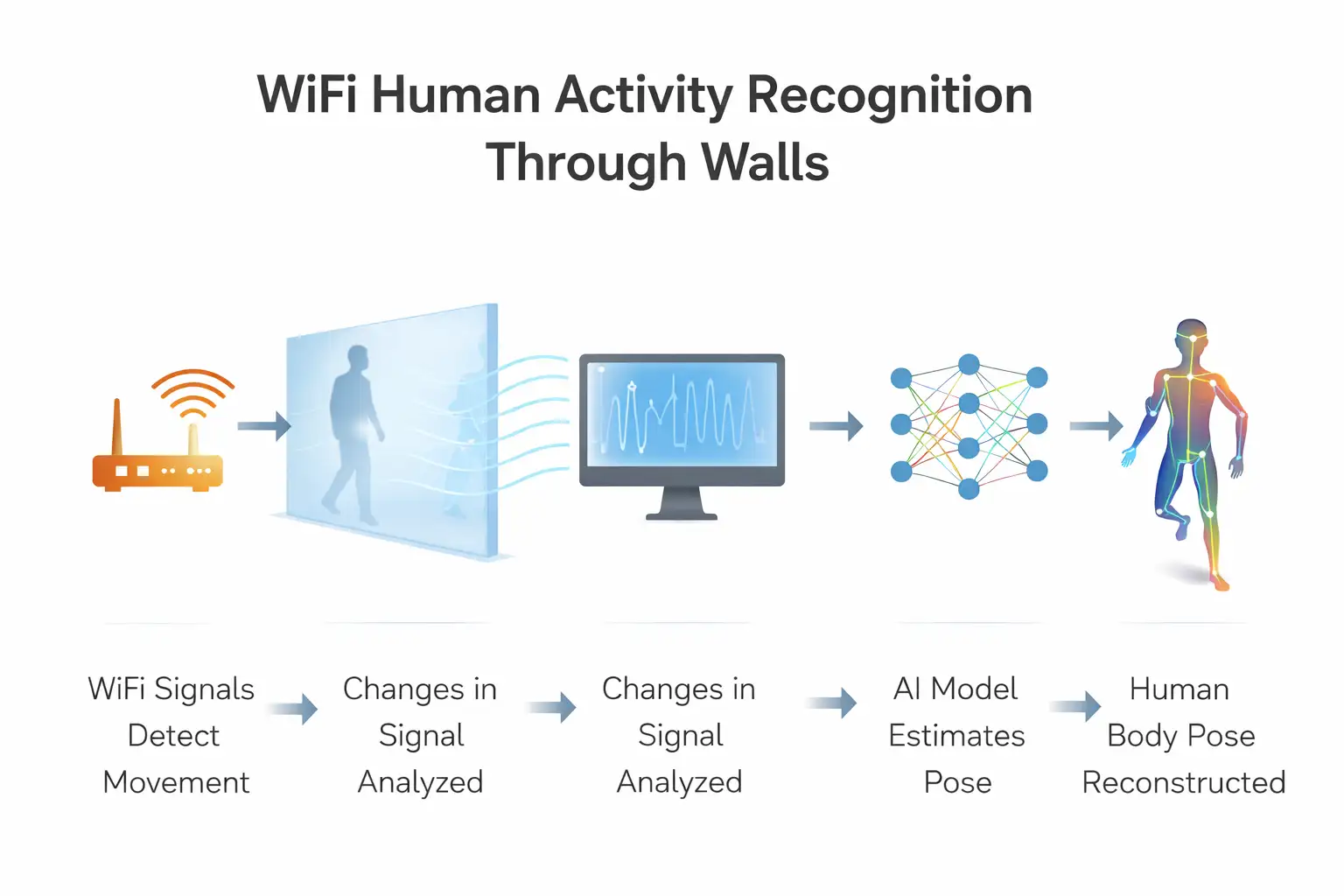

WiFi Human Activity Recognition Through Walls is a sensing approach that analyzes how human bodies disturb WiFi signals, especially CSI data, to estimate movement, position, posture, and sometimes vital-sign patterns. It does not require a camera feed, but it does require the right signal access and a trained model.

In plain English, your body changes the path of radio waves. A person walking, turning, breathing, or standing still causes small shifts in signal amplitude and phase.

Those shifts can be captured, cleaned, and translated into patterns that machine learning models can interpret. That is the scientific basis behind this field. It is not science fiction, but it is also not magic. The quality of the result depends heavily on hardware, room conditions, and model design.
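To make the amplitude-and-phase idea concrete, here is a minimal Python sketch of a simplified two-path model: one fixed path between transmitter and receiver plus one path reflected off a person. The function name, the gains, and the 2.437 GHz frequency (the center of WiFi channel 6) are illustrative assumptions, not values from the CMU paper or RuView. As the reflected path lengthens by half a wavelength, about 6 cm at this frequency, the two paths swing from reinforcing each other to partially cancelling, which is the kind of amplitude change a sensing pipeline looks for.

```python
import cmath
import math

SPEED_OF_LIGHT = 299_792_458.0  # meters per second


def two_path_channel(freq_hz: float, static_gain: float,
                     reflect_gain: float, reflect_path_m: float) -> complex:
    """Toy channel response: a fixed path plus one body-reflected path.

    The reflected term's phase depends on its path length measured in
    wavelengths, so a moving person rotates it relative to the static term.
    Real rooms have many paths; this model keeps only two.
    """
    wavelength = SPEED_OF_LIGHT / freq_hz
    reflected = reflect_gain * cmath.exp(-2j * math.pi * reflect_path_m / wavelength)
    return static_gain + reflected


if __name__ == "__main__":
    freq = 2.437e9                      # assumed: WiFi channel 6 center frequency
    wavelength = SPEED_OF_LIGHT / freq  # roughly 0.123 m
    for step in range(5):
        extra = step * wavelength / 4   # lengthen the reflected path in quarter-wavelength steps
        h = two_path_channel(freq, 1.0, 0.2, extra)
        print(f"extra path {extra * 100:5.2f} cm -> amplitude {abs(h):.3f}, "
              f"phase {cmath.phase(h):+.3f} rad")
```

With these toy gains the amplitude moves from 1.2 down to 0.8 and back over one wavelength of extra path, while the phase wobbles alongside it. Both effects are what later stages of a pipeline try to track.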

This is also where a lot of online coverage gets sloppy. The strongest public claims are not about any random home router suddenly acting like X-ray vision.

The RuView repository itself says full pose estimation, vital signs, and through-wall sensing rely on CSI-capable hardware, such as ESP32-S3 nodes or research NICs. Standard consumer laptops generally provide only RSSI-level presence detection, which is much more limited. That distinction matters. A lot.
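The RSSI versus CSI gap can be shown with a small sketch. Here RSSI is approximated as total received power across subcarriers in decibels. That is a deliberate simplification, since real chipsets compute and report RSSI in vendor-specific ways. Three hypothetical CSI snapshots with very different per-subcarrier shapes produce the same RSSI-style number, which helps explain why RSSI alone is mostly limited to coarse presence detection.

```python
import cmath
import math
from typing import List, Sequence, Tuple


def rssi_like_db(csi: Sequence[complex]) -> float:
    """Simplified RSSI proxy: total power summed over subcarriers, in dB (relative units)."""
    return 10 * math.log10(sum(abs(h) ** 2 for h in csi))


def csi_profile(csi: Sequence[complex]) -> List[Tuple[float, float]]:
    """Per-subcarrier (amplitude, phase in radians), rounded for readability."""
    return [(round(abs(h), 3), round(cmath.phase(h), 3)) for h in csi]


# Hypothetical 4-subcarrier snapshots; real CSI has dozens of subcarriers per antenna pair.
flat = [1 + 0j, 1 + 0j, 1 + 0j, 1 + 0j]
concentrated = [2 + 0j, 0j, 0j, 0j]   # same total power, all on one subcarrier
rotated = [1 + 0j, 1j, -1 + 0j, -1j]  # same amplitudes as `flat`, different phases

for name, csi in [("flat", flat), ("concentrated", concentrated), ("rotated", rotated)]:
    print(f"{name:13s} RSSI-like {rssi_like_db(csi):.2f} dB  CSI {csi_profile(csi)}")
```

All three snapshots report about 6.02 dB on the RSSI-style scale, yet their per-subcarrier amplitude and phase profiles are clearly different. That extra structure is what pose and vital-sign models depend on.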

What Happened

A public GitHub project called RuView, published by Reuven Cohen, presents itself as an implementation of WiFi DensePose for real-time human pose estimation, vital-sign monitoring, and presence detection from WiFi signals.

The project documentation describes a Rust-based sensing pipeline, Docker deployment options, ESP32-S3 support, and multi-node sensing features. In other words, the concept is no longer confined to academic PDFs. It is being packaged as software others can inspect and run.

The underlying academic foundation is real as well. Carnegie Mellon University published work on dense human pose estimation from WiFi, and the associated paper explains that the model maps WiFi phase and amplitude to UV coordinates across 24 human body regions.

The same line of work positions WiFi as a cheaper and more privacy-friendly sensing option than cameras, LiDAR, or radar in some indoor scenarios, especially where occlusion and poor lighting undermine vision systems.

So the core story is not that a brand-new scientific principle was discovered yesterday. The bigger change is operational. Public code, deployment instructions, and lower-cost node-based setups make the research easier to test and potentially easier to misuse.

That shift from lab-only work to accessible implementation is what makes this topic important for security teams right now.

Why It Exists

The technology exists because cameras have obvious limits. They struggle with occlusion and lighting, and many people do not want cameras in bedrooms, clinics, care homes, or other sensitive spaces. WiFi sensing tries to solve that by using radio signals that can work without light and, in some cases, through nonmetallic barriers.

The Carnegie Mellon research says this quite clearly. Camera-based pose estimation is affected by poor light and occlusion. LiDAR and radar can be costly and power-hungry. WiFi, by contrast, is already common indoors and may provide a lower-cost path for human sensing. That makes the technology attractive for elder care, home monitoring, occupancy, and safety scenarios where camera deployment is sensitive or impractical.

But there is a second reason, and it is less comfortable. Passive or near-passive sensing is attractive precisely because it can be less visible than cameras.

The USENIX Security paper on public perceptions of RF sensing notes that people were often unaware of what RF sensing could do until it was explained to them. It also warns that describing RF sensing as privacy-preserving can be misleading because it can reveal motion, identity, and other sensitive signals without visual capture.

How It Works

The pipeline usually starts with CSI capture, then signal cleaning, feature extraction, and finally a model that predicts body pose or activity from those patterns. In the public RuView documentation, this includes amplitude and phase processing, DensePose-style mapping, and optional multi-node fusion for better coverage.
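The four stages can be sketched as a toy Python pipeline. Capture is assumed to have already produced CSI frames because it is hardware-specific. Cleaning keeps per-subcarrier amplitudes, feature extraction measures how much each subcarrier fluctuates over a window, and a fixed threshold stands in for the trained model. The function names and the 0.05 threshold are hypothetical and chosen only for illustration. Systems like the CMU work use deep networks at the final stage instead.

```python
import math
import statistics
from typing import List, Sequence

Frame = Sequence[complex]  # one CSI snapshot: a complex value per subcarrier


def clean(frames: Sequence[Frame]) -> List[List[float]]:
    """Stage 2: keep per-subcarrier amplitudes and drop truncated frames."""
    width = max(len(f) for f in frames)
    return [[abs(h) for h in f] for f in frames if len(f) == width]


def extract_features(amplitudes: List[List[float]]) -> List[float]:
    """Stage 3: per-subcarrier standard deviation across the time window."""
    return [statistics.pstdev(column) for column in zip(*amplitudes)]


def predict(features: List[float], threshold: float = 0.05) -> str:
    """Stage 4: placeholder for a trained model, a single threshold on mean fluctuation."""
    return "motion" if statistics.fmean(features) > threshold else "static"


def run_pipeline(frames: Sequence[Frame]) -> str:
    return predict(extract_features(clean(frames)))


# Hypothetical windows of 50 frames x 8 subcarriers.
still_room = [[1 + 0j] * 8 for _ in range(50)]
moving_person = [[complex(1 + 0.3 * math.sin(0.4 * t + k), 0) for k in range(8)]
                 for t in range(50)]
print(run_pipeline(still_room), run_pipeline(moving_person))
```

Keeping each stage as a separate function mirrors the structure described above. A real system would swap in proper capture, filtering, and a learned model without changing the overall shape.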

At the signal level, WiFi uses orthogonal frequency-division multiplexing (OFDM), which spreads data across many subcarriers. Human movement changes the amplitude and phase measured on each of those subcarriers. RuView describes extracting these amplitude and phase changes from CSI and processing them at high speed in Rust, and its benchmark section lists a full CSI pipeline running at 54,000 frames per second.
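The per-subcarrier effect can be illustrated with a short sketch. It assumes an 802.11n 20 MHz channel with 56 usable subcarriers at indices -28 to 28 (index 0, the DC subcarrier, is unused), spaced 312.5 kHz apart around the channel 6 center frequency of 2.437 GHz. When a reflected path grows by 1 cm, every subcarrier's phase rotates by about half a radian, but by slightly different amounts because each subcarrier sits at a different frequency. These parameter values are assumptions, and the subcarrier layout that a particular ESP32 or research NIC reports may differ.

```python
import math
from typing import List, Sequence

SPEED_OF_LIGHT = 299_792_458.0     # meters per second
SUBCARRIER_SPACING_HZ = 312_500.0  # 802.11n/ac OFDM subcarrier spacing
HT20_INDICES = [k for k in range(-28, 29) if k != 0]  # 56 subcarriers, DC skipped


def subcarrier_freqs(center_hz: float, indices: Sequence[int]) -> List[float]:
    """Absolute frequency of each subcarrier around the channel center."""
    return [center_hz + k * SUBCARRIER_SPACING_HZ for k in indices]


def phase_shift_rad(center_hz: float, indices: Sequence[int], delta_path_m: float) -> List[float]:
    """Phase rotation on each subcarrier when one path lengthens by delta_path_m.

    Uses delta_phi = 2*pi*f*delta_d / c, wrapped to [-pi, pi].
    """
    return [math.remainder(2 * math.pi * f * delta_path_m / SPEED_OF_LIGHT, 2 * math.pi)
            for f in subcarrier_freqs(center_hz, indices)]


shifts = phase_shift_rad(2.437e9, HT20_INDICES, 0.01)  # assumed: channel 6, 1 cm path change
print(f"lowest subcarrier {shifts[0]:.4f} rad, highest {shifts[-1]:.4f} rad, "
      f"spread {shifts[-1] - shifts[0]:.4f} rad")
```

The spread across subcarriers is only a few thousandths of a radian for a 1 cm change, but it is systematic and grows with path length. This is one reason per-subcarrier measurements carry geometric information.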

The academic paper similarly explains that sanitized CSI is translated into image-like feature maps and then passed to a modified DensePose-RCNN architecture.
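Raw CSI phase is usually not usable as captured. Receiver timing and carrier-frequency offsets add a slope and a constant offset across subcarriers, and the values wrap at plus or minus pi. A common sanitization approach in the CSI literature is to unwrap the phase and then subtract a least-squares line fitted across subcarrier indices. The sketch shows that technique in plain Python. It is not necessarily the exact procedure in the CMU paper, and the function names are hypothetical. Stacking sanitized frames over time produces a time-by-subcarrier matrix that plays the role of an image-like input.

```python
import math
from typing import List, Sequence


def unwrap(phases: Sequence[float]) -> List[float]:
    """Remove 2*pi jumps between neighboring subcarriers (a simplified numpy.unwrap)."""
    out = [phases[0]]
    offset = 0.0
    for prev, cur in zip(phases, phases[1:]):
        step = cur - prev
        if step > math.pi:
            offset -= 2 * math.pi
        elif step < -math.pi:
            offset += 2 * math.pi
        out.append(cur + offset)
    return out


def remove_linear_trend(values: Sequence[float], indices: Sequence[int]) -> List[float]:
    """Subtract the least-squares line a*index + b, discarding slope and constant offset."""
    n = len(values)
    mean_x = sum(indices) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in indices)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(indices, values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return [y - (slope * x + intercept) for x, y in zip(indices, values)]


def sanitize_phase(raw: Sequence[float], indices: Sequence[int]) -> List[float]:
    return remove_linear_trend(unwrap(raw), indices)


def to_feature_map(frames: Sequence[Sequence[float]], indices: Sequence[int]) -> List[List[float]]:
    """Stack sanitized frames into a time x subcarrier matrix, an image-like array."""
    return [sanitize_phase(frame, indices) for frame in frames]


indices = list(range(-15, 15))                  # hypothetical 30 subcarrier indices
true_phase = [0.3 * k + 1.0 for k in indices]   # pure slope + offset, no body signal
wrapped = [math.remainder(p, 2 * math.pi) for p in true_phase]
print(max(abs(r) for r in sanitize_phase(wrapped, indices)))  # close to zero
```

Because the synthetic input contains only a slope and an offset, the sanitized output is essentially zero. Any structure that survives sanitization in real data is the part a model can attribute to the environment or to people.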

The pose output is not just a simple “person detected” flag. The CMU work says the system maps signal information to UV coordinates in 24 body regions, which is why people describe it as dense pose rather than coarse localization. That is a meaningful difference. A motion detector tells you something moved. A dense-pose approach tries to infer how a body is arranged in space.
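The difference between a presence flag and dense pose is easiest to see in the shape of the output. The sketch assumes the DensePose convention of a per-pixel body-region label (0 for background, 1 to 24 for body regions) plus U and V surface coordinates between 0 and 1. The class and helper names are hypothetical and do not reflect the CMU or RuView code. Collapsing the grid to a presence flag keeps a single bit, while the dense output still records which regions are visible and where on each region a pixel falls.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List

NUM_BODY_REGIONS = 24  # DensePose convention; label 0 means background


@dataclass(frozen=True)
class DensePixel:
    region: int   # 0 = background, 1..24 = body region
    u: float      # position within the region's surface chart, 0..1
    v: float

    def __post_init__(self) -> None:
        if not 0 <= self.region <= NUM_BODY_REGIONS:
            raise ValueError(f"region must be 0..{NUM_BODY_REGIONS}, got {self.region}")
        if not (0.0 <= self.u <= 1.0 and 0.0 <= self.v <= 1.0):
            raise ValueError("u and v must lie in [0, 1]")


Grid = List[List[DensePixel]]


def presence_flag(grid: Grid) -> bool:
    """What a presence or motion detector reports: is any body pixel present?"""
    return any(p.region != 0 for row in grid for p in row)


def visible_regions(grid: Grid) -> Counter:
    """What dense pose adds: how many pixels fall on each body region."""
    return Counter(p.region for row in grid for p in row if p.region != 0)


# Hypothetical 2 x 3 output with region labels 1 and 2 (their body-part meanings are not specified here).
bg = DensePixel(0, 0.0, 0.0)
grid = [
    [bg, DensePixel(1, 0.20, 0.40), DensePixel(1, 0.25, 0.45)],
    [bg, DensePixel(2, 0.60, 0.10), bg],
]
print(presence_flag(grid), dict(visible_regions(grid)))
```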

RuView also documents parallel vital-sign extraction. Its public material states that bandpass filtering at 0.1 to 0.5 Hz targets breathing in the 6 to 30 BPM range, while 0.8 to 2.0 Hz targets heart rate in what it describes as a 40 to 120 BPM range (strictly, 0.8 Hz corresponds to 48 BPM, since BPM equals hertz times 60). These are implementation claims from the repository, not clinical validation claims, so they should be read as technical capability statements rather than medical-grade guarantees.
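The band-splitting idea can be sketched in plain Python. Instead of designing a filter, the sketch evaluates the discrete Fourier transform only at frequencies inside each band and reports the strongest one. It then converts hertz to breaths or beats per minute by multiplying by 60. This band-limited spectral peak is a swapped-in simplification, not RuView's implementation. The 20 Hz sample rate, 60-second window, and synthetic signal are assumptions, and real WiFi heartbeat components are far weaker and noisier than in this toy input.

```python
import cmath
import math
from typing import Sequence


def band_peak_per_minute(signal: Sequence[float], fs_hz: float,
                         lo_hz: float, hi_hz: float) -> float:
    """Strongest frequency inside [lo_hz, hi_hz], returned in cycles per minute.

    Evaluates the DFT only at bins inside the band, which acts like an ideal
    bandpass followed by peak picking. Resolution is fs_hz / len(signal).
    """
    n = len(signal)
    mean = sum(signal) / n
    x = [s - mean for s in signal]  # drop the DC level
    k_lo = max(1, math.ceil(lo_hz * n / fs_hz - 1e-9))
    k_hi = min(n // 2, math.floor(hi_hz * n / fs_hz + 1e-9))
    if k_lo > k_hi:
        raise ValueError("window too short to resolve this band")
    best_k, best_mag = k_lo, -1.0
    for k in range(k_lo, k_hi + 1):
        coeff = sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        if abs(coeff) > best_mag:
            best_k, best_mag = k, abs(coeff)
    return best_k * 60.0 * fs_hz / n


FS = 20.0  # assumed CSI sampling rate, Hz
N = 1200   # 60-second window
# Synthetic amplitude series: 0.25 Hz breathing plus a much smaller 1.2 Hz heartbeat.
series = [math.sin(2 * math.pi * 0.25 * t / FS) + 0.1 * math.sin(2 * math.pi * 1.2 * t / FS)
          for t in range(N)]

breaths = band_peak_per_minute(series, FS, 0.1, 0.5)  # breathing band quoted above
beats = band_peak_per_minute(series, FS, 0.8, 2.0)    # heart-rate band quoted above
print(f"breathing ~{breaths:.0f} per minute, heart ~{beats:.0f} per minute")
```

With a 60-second window the frequency resolution is 1/60 Hz, or one cycle per minute, so shorter windows give coarser rate estimates. A production pipeline would more likely use an FFT library and a proper filter design.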

Old vs New

Traditional monitoring tools and WiFi Human Activity Recognition Through Walls do not solve the same problem in the same way.

Cameras provide direct visual evidence, but they need line of sight, raise immediate privacy concerns, and are easier for people to notice and regulate.

Radar and LiDAR can perform strong sensing tasks, but the CMU work notes their cost and power requirements can be higher than WiFi-based alternatives.

WiFi sensing can work in darkness and through some barriers, and in some setups it may reuse WiFi hardware that is already deployed. But it usually needs specialized CSI access and can create a surveillance channel that occupants may never see.

That is the real USP here, though it is not a marketing slogan. This approach can shift monitoring from visible optics to invisible radio interpretation. For operators, that can mean less hardware friction. For privacy teams, it can mean a harder governance problem.

Example

Before this kind of setup, a warehouse manager wanting richer motion intelligence had a few obvious choices. Install cameras. Add wearables. Invest in radar. Each route comes with tradeoffs: privacy objections, employee adoption issues, or hardware cost. That is the old way.

Now picture a multi-node CSI-capable setup. RuView’s public docs describe 4 to 6 low-cost nodes, 12 or more overlapping paths, 360-degree room coverage, and sub-inch accuracy claims for some multistatic scenarios.

In the same repository, four ESP32-S3 nodes are described as providing 12 transmitter-receiver links, with channel hopping and two-person tracking at 20 Hz in the documented pipeline. That is the new way, at least in experimental or controlled deployments.
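The link counts follow from simple combinatorics if every node can transmit to every other node and each direction counts as its own transmitter-receiver link. Under that assumption, n nodes give n * (n - 1) links, which reproduces the 12 links quoted for four nodes and gives 30 for six. The node names in the sketch are placeholders.

```python
from itertools import permutations
from typing import List, Sequence, Tuple


def directed_links(nodes: Sequence[str]) -> List[Tuple[str, str]]:
    """Every ordered (transmitter, receiver) pair between distinct nodes."""
    return list(permutations(nodes, 2))


for count in range(4, 7):
    nodes = [f"node-{i}" for i in range(1, count + 1)]  # placeholder node names
    print(count, "nodes ->", len(directed_links(nodes)), "directed links")
```

Counting unordered pairs instead would give only 6 links for four nodes, so the repository's figure appears to count each direction separately.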

The result is not necessarily “better than cameras.” It is different. You may get through-obstacle sensing and lower visual privacy exposure, but you also create a monitoring method that staff, visitors, or residents might not recognize at all. In an office, hospital, shelter, or residential building, that could change consent, disclosure, and policy requirements overnight.

Who It Affects

This affects more than engineers. It matters to security teams, compliance leaders, facilities managers, healthcare operators, smart-building vendors, and ordinary occupants whose movement or vital-sign data could be inferred without a camera in sight.

For regular users, the issue is awareness. Most people understand a camera. Fewer understand CSI, RF sensing, or what can be inferred from wireless signal disturbances. The USENIX study found that many participants were initially unaware of RF sensing capabilities and became concerned once the full picture was explained.

For businesses, the issue is exposure and governance.

Security teams need to treat RF sensing as a possible physical-layer risk, especially in sensitive spaces.

Compliance teams need to consider whether location, tracking, and fingerprinting data could qualify as personal data under privacy law.

Healthcare, care-home, and smart-building operators need to weigh utility against consent, transparency, and misuse risk.

For executives, the big point is simple. If a system can infer presence, movement, or biometric patterns from radio signals, it belongs in both your cyber risk register and your privacy impact process.

Pros and Limits

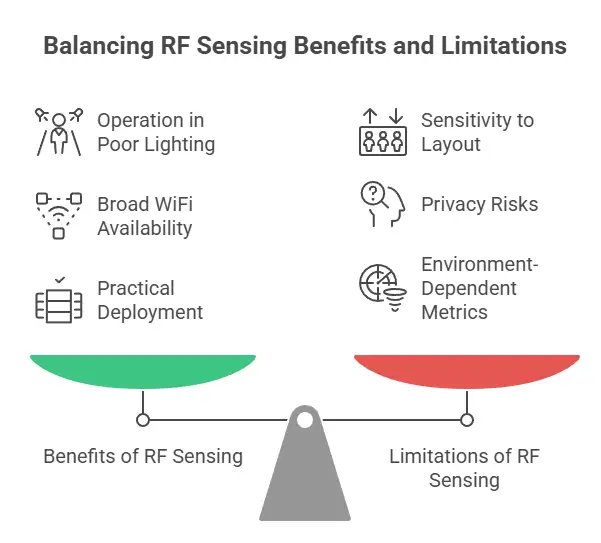

There are real benefits. The CMU work and related RF sensing research point to several: operation in poor lighting, resilience to some forms of occlusion, broad indoor availability of WiFi hardware, and privacy advantages over visual capture in certain contexts. The RuView repository adds practical deployment benefits such as Docker packaging, edge processing, and offline-capable modes in some documented modules.

There are also measurable performance claims in the public materials. RuView lists a 54,000 fps CSI pipeline benchmark in Rust, under 1 ms UDP latency on a local network for ESP32-S3 streaming, and reliable 3-meter through-wall presence detection in one hardware section.

Elsewhere in the repository, a disaster-response module references 3 to 5 meter penetration for localization through rubble layers. These are repository claims and should be treated as environment-dependent implementation metrics, not universal field guarantees.

The limitations are just as important. First, not all “ordinary WiFi” equipment exposes CSI in a usable way. Second, RF sensing can be highly sensitive to layout, materials, interference, and device placement. Third, a privacy-friendly label may be incomplete.

The USENIX study explicitly argues that RF sensing can create risks similar to cameras and, in some situations, additional ones because it works through barriers and collects multiple sensitive signal types at once.

What to Do

You do not need to panic. You do need to update your assumptions.

Review whether your high-sensitivity spaces rely on the belief that “no camera” means “no sensing.” That assumption may no longer hold.

Inventory CSI-capable or rogue ESP32-class devices in sensitive environments and near access points where unauthorized sensing could be staged.

Add RF sensing to privacy impact assessments, especially where movement, occupancy, or biometric inference could affect employees, patients, visitors, or residents.

Consider shielding, segmentation, and physical inspection controls in spaces handling confidential meetings, research, healthcare, or regulated operations. These are prudent mitigations when passive sensing risk is plausible.
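The device-inventory step can start as a simple comparison between what a wireless survey observes and what the asset register approves. The sketch assumes you already have a list of observed MAC addresses from whatever survey tool is in use. The approved set and the vendor-prefix watchlist are hypothetical placeholders; real Espressif or research-NIC prefixes should come from the official IEEE OUI registry. The sketch also flags locally administered (often randomized) addresses, because those cannot be attributed to a vendor by prefix at all.

```python
from typing import Dict, Iterable, Set

# Hypothetical placeholders: populate from your asset register and the IEEE OUI registry.
APPROVED: Set[str] = {"00:11:22:33:44:55"}
WATCHLIST_PREFIXES: Set[str] = {"A4:00:00"}  # placeholder prefix for illustration only


def is_locally_administered(mac: str) -> bool:
    """The 0x02 bit of the first octet marks a locally administered (often randomized) MAC."""
    return bool(int(mac.split(":")[0], 16) & 0x02)


def classify(observed: Iterable[str]) -> Dict[str, str]:
    """Label each observed MAC for follow-up; anything not approved needs review."""
    results = {}
    for raw in observed:
        mac = raw.upper()
        if mac in APPROVED:
            results[mac] = "approved"
        elif is_locally_administered(mac):
            results[mac] = "review: randomized or locally administered"
        elif mac[:8] in WATCHLIST_PREFIXES:
            results[mac] = "review: watchlist vendor prefix"
        else:
            results[mac] = "review: not in inventory"
    return results


survey = ["00:11:22:33:44:55", "a4:00:00:12:34:56", "DA:00:00:00:00:01", "00:1A:2B:3C:4D:5E"]
for mac, verdict in classify(survey).items():
    print(mac, verdict)
```

A check like this only finds devices that transmit and are visible to the survey, so it complements physical inspection rather than replacing it.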

This is also the kind of issue where a specialist security partner can help without turning it into drama. A firm such as Hoplon Infosec could be useful in the practical middle ground: RF exposure review, rogue-device detection, privacy-by-design assessment, and policy alignment for smart-space deployments. That is not about hype. It is about treating wireless sensing as both a business tool and a risk surface.

Hoplon Insight

If your organization is exploring smart buildings, occupancy analytics, elder-care monitoring, or contactless sensing, start with governance before rollout.

Ask four questions. What data is being inferred, not just collected? Who may be affected besides the intended user? Can occupants reasonably understand the sensing? And if a third party planted or repurposed hardware, how would you know?

Those questions tend to separate mature deployments from messy ones.

FAQ

Can WiFi Human Activity Recognition Through Walls really detect people behind walls?

Yes, under specific conditions. Research from Carnegie Mellon University and the RuView documentation both support pose or presence inference from WiFi-based sensing, but the setup depends on CSI-capable hardware, training, and environment quality.

Does this work with any normal home router?

Not fully. RuView states that full pose, vital-sign, and through-wall features require CSI-capable hardware such as ESP32-S3 nodes or research NICs. A standard laptop on ordinary WiFi is described as much more limited.

Is WiFi sensing safer for privacy than cameras?

Sometimes, but not automatically. It removes visible images, which many people prefer in private spaces, yet RF sensing can still infer sensitive information and can work through physical barriers. The privacy tradeoff is real, not one-sided.

Is this already regulated like video surveillance?

Not in a neat way. Data protection guidance makes clear that WiFi tracking and fingerprinting can involve personal data and GDPR obligations, but RF sensing-specific controls are still uneven compared with mature camera rules.

What is the biggest business risk?

The quietness of the sensing channel. A visible camera starts a conversation. RF sensing may not. That gap can create policy, consent, insider-risk, and rogue-device problems before leadership realizes the exposure exists.

Conclusion

WiFi Human Activity Recognition Through Walls is no longer just a research curiosity. Verified public sources show that WiFi-based dense pose estimation, presence sensing, and vital-sign extraction are technically plausible with the right CSI-capable hardware, signal processing, and models. The strongest near-term implication is not only new product capability. It is a new category of invisible monitoring risk.

For decision-makers, the smart move is balanced realism. Do not dismiss the technology because it sounds futuristic. Do not oversell it as a universal camera replacement either. Treat it as a growing sensing layer that could help in safety, care, and automation, while also demanding tighter privacy review, stronger wireless asset control, and more mature governance. That is where the real value sits.

Summary

The USP here is subtle but important: this approach can infer movement and body-state information without visible imaging hardware, which may reduce some camera-related friction while creating a different privacy and security challenge.

The main benefit is contactless sensing in low-light or obstructed environments. The brand capability angle is practical: organizations that want to assess this exposure, design defensible controls, or evaluate smart-space risk would benefit from a security-led review, the kind of engagement a provider like Hoplon Infosec can support.

Trusted References

Carnegie Mellon University, Dense Human Pose Estimation From WiFi.

RuView GitHub repository and documentation.

AEPD guidance on Wi-Fi tracking technologies and GDPR.
