OpenAI Codex Security Finds & Fixes Vulnerabilities Fast

Hoplon InfoSec

08 Mar, 2026

Introduction

Imagine that you are a developer looking over thousands of lines of code at night. Everything seems to be fine. The tests pass. The feature ships.

A few weeks later, a security researcher finds a serious hole in that codebase. It is only a small mistake in logic, but it gives attackers a way in.

This happens more often than many teams like to admit. Modern software is huge, and security checks can't keep up.

OpenAI Codex Security was made to fix that exact problem.

In the past, developers used static scanners and manual security checks. They worked, but they were slow and often set off false alarms. A new approach is now emerging: AI systems analyze whole repositories, simulate attacks against them, and even suggest fixes.

Old way: checking code by hand and using static scanning

New way: finding vulnerabilities with AI

The result is faster security detection and less risk.

This change is important for companies that make a lot of software.

Main Points

• OpenAI Codex Security uses AI to look at code repositories and find software vulnerabilities on its own.

• The system looks at commits, project structure, and dependencies to find security holes.

• It can try to confirm vulnerabilities before reporting them to cut down on false positives.

• Some test reports say that the system found thousands of serious problems in open-source projects.

• The technology helps DevSecOps by adding security analysis to the development process.

• People still check the results and approve the fixes.

• Companies like Hoplon Infosec, which is a security consulting firm, help businesses use AI security tools safely.

Quick Answer

What does OpenAI Codex Security do?

OpenAI Codex Security is an AI-powered application security system that looks at code repositories, finds security holes, checks for possible exploits, and suggests fixes for developers.

The goal is to automate some parts of software security research and help teams find risks earlier in the development process.

Security researchers say that AI tools can help find vulnerabilities faster, but they still need expert oversight.

What It Is

What is OpenAI Codex Security?

OpenAI Codex Security is an AI system that uses large language model reasoning and automated analysis techniques to look at software repositories and find security holes.

In simple terms, it's like a digital security analyst that reads code the way an experienced developer would.

It looks at patterns. It follows how logic flows. After that, it looks for mistakes that attackers could use.

Several cybersecurity reports say that the system works a lot like an automated vulnerability researcher. It looks through big codebases, finds possible problems, and suggests ways to fix them.

That method is different from older security scanners, which relied mostly on pattern-matching rules.

Why It Exists

The reason for OpenAI Codex Security

Software is now very complicated.

Modern apps have thousands of dependencies, millions of lines of code, and automated pipelines that push out updates all the time.

Because of how complicated this is, traditional security reviews have a hard time keeping up.

Several factors pushed the industry toward solutions like OpenAI Codex Security:

• Rapid growth of open-source software

• A rising number of attacks on the software supply chain

• A shortage of experienced security engineers

• Codebases that are too big to check by hand

More and more, security teams use automation to help human analysts.

AI systems add another level of analysis that can look at huge codebases faster than a person could do on their own.

Types of Analysis

Categories of OpenAI Codex Security analysis

OpenAI Codex Security's analysis usually fits into a few different groups, but the specifics depend on how it is used.

Structure of the Code

Looking at how the code is put together

The system looks at the architecture of the application and finds strange patterns or logic structures that aren't safe.

Dependency Risks

Finding weaknesses in dependencies

Many software vulnerabilities come from third-party libraries. AI analysis can follow chains of dependencies and find the risks they carry.
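Following a dependency chain can be illustrated with a small graph search. This is a minimal sketch, not how any particular tool works: the package names, the `DEPENDENCIES` graph, and the `VULNERABLE` set are all made up for illustration, and a real scanner would read them from lock files and a vulnerability database.

```python
from collections import deque

# Hypothetical dependency graph: package -> its direct dependencies.
DEPENDENCIES = {
    "webapp": ["http-client", "template-engine"],
    "http-client": ["tls-lib"],
    "template-engine": [],
    "tls-lib": [],
}

# Hypothetical set of packages with a known vulnerability.
VULNERABLE = {"tls-lib"}


def vulnerable_chains(root, graph, vulnerable):
    """Return every dependency path from root that ends at a vulnerable package."""
    chains = []
    queue = deque([[root]])
    while queue:
        path = queue.popleft()
        current = path[-1]
        if current in vulnerable:
            chains.append(path)
        for dep in graph.get(current, []):
            if dep not in path:  # skip packages already on this path (cycle guard)
                queue.append(path + [dep])
    return chains


chains = vulnerable_chains("webapp", DEPENDENCIES, VULNERABLE)
for chain in chains:
    print(" -> ".join(chain))
```

The point of returning the whole path is that a team often does not depend on the vulnerable package directly, so knowing which direct dependency pulls it in tells them what to upgrade.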

Commit Review

Investigating commit history

Looking at old commits can help find code changes that could be dangerous and lead to security holes.
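As a rough illustration of commit review, the sketch below scans the added lines of a unified diff for a few risky patterns. The pattern list is an assumption chosen for the example and is far simpler than what an AI reviewer reasons about; it only shows the basic idea of looking at what a commit introduces.

```python
import re

# Illustrative patterns only; a real reviewer would use far richer analysis.
RISKY_PATTERNS = {
    "dynamic code execution": re.compile(r"\beval\s*\(|\bexec\s*\("),
    "shell command with shell=True": re.compile(r"shell\s*=\s*True"),
    "disabled TLS verification": re.compile(r"verify\s*=\s*False"),
}


def flag_added_lines(diff_text):
    """Return (label, line) pairs for added diff lines that match a risky pattern."""
    findings = []
    for line in diff_text.splitlines():
        # Added lines start with '+'; '+++' marks the file header, not code.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        added = line[1:]
        for label, pattern in RISKY_PATTERNS.items():
            if pattern.search(added):
                findings.append((label, added.strip()))
    return findings


sample_diff = """\
--- a/app.py
+++ b/app.py
@@ -1,3 +1,4 @@
 import requests
-resp = requests.get(url)
+resp = requests.get(url, verify=False)
+result = eval(user_input)
"""

findings = flag_added_lines(sample_diff)
for label, code in findings:
    print(f"{label}: {code}")
```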

Behavior Tests

Simulating behavior

Some systems try to mimic how attackers might take advantage of weaknesses that have been found.

These methods help create a complete picture of security instead of just checking rules.

Causes

Causes and risk factors behind software vulnerabilities

Knowing why vulnerabilities happen helps us understand why tools like OpenAI Codex Security are useful.

Most of the time, software vulnerabilities come from simple mistakes made during development, not from malicious intent.

Some of the most common causes are:

• Logic errors in authentication workflows

• Improper input validation

• Memory-handling issues

• Misconfigured permissions

• Insecure third-party libraries

This is easier to understand with a real-life example.

Think of a web app that lets you upload files. An attacker could upload a harmful script if the code doesn't check file types correctly.

A human reviewer taking a quick look might miss this problem. Automated AI analysis might flag it because the code resembles known weakness patterns.
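To make the upload example concrete, here is a minimal sketch of a stricter check. It assumes a small allowlist of file types (the `ALLOWED_TYPES` table and the `is_upload_allowed` function are hypothetical names for this example) and checks both the extension and the leading bytes of the file, so that renaming a script to `.png` is not enough to get it through.

```python
from pathlib import PurePosixPath

# Allowed extension -> the leading bytes such a file is expected to start with.
ALLOWED_TYPES = {
    ".png": b"\x89PNG\r\n\x1a\n",
    ".jpg": b"\xff\xd8\xff",
    ".pdf": b"%PDF-",
}


def is_upload_allowed(filename, content):
    """Accept a file only if its extension is allowlisted and its content matches."""
    # Keep only the final path component so directory tricks are ignored.
    name = PurePosixPath(filename.replace("\\", "/")).name
    suffix = PurePosixPath(name).suffix.lower()
    signature = ALLOWED_TYPES.get(suffix)
    if signature is None:
        return False
    return content.startswith(signature)


png_bytes = b"\x89PNG\r\n\x1a\n" + b"rest of image data"
print(is_upload_allowed("photo.png", png_bytes))
print(is_upload_allowed("shell.php", b"<?php echo 1; ?>"))
print(is_upload_allowed("shell.php.png", b"<?php echo 1; ?>"))
```

A signature check like this is only one layer. It does not prove a file is harmless, which is why uploaded files are usually also stored outside any location where the server would execute them.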

Warning Signs

Signs and indicators of security flaws

Security flaws rarely announce themselves.

But developers and security teams often see small warning signs.

• Applications that don't behave as expected

• Error messages that expose private data

• Inconsistent authentication checks

• Suspicious network requests

• Log entries that don't make sense

AI systems like OpenAI Codex Security try to find these signals in code before they turn into security holes in production.
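One of the warning signs above, error messages that expose private data, is easy to illustrate. The sketch below checks a list of messages against a few patterns; the patterns and sample messages are assumptions made for the example, and real detection would need far more context than a regular expression provides.

```python
import re

# Illustrative patterns for data that should not appear in user-facing errors.
SENSITIVE_PATTERNS = {
    "email address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "password field": re.compile(r"password\s*[=:]\s*\S+", re.IGNORECASE),
    "stack trace": re.compile(r"Traceback \(most recent call last\)"),
}


def scan_messages(messages):
    """Return (message index, label) for each message that leaks sensitive data."""
    hits = []
    for index, message in enumerate(messages):
        for label, pattern in SENSITIVE_PATTERNS.items():
            if pattern.search(message):
                hits.append((index, label))
    return hits


messages = [
    "Login failed for alice@example.com",
    "DB error: password=hunter2 rejected",
    "Request completed in 12 ms",
]

hits = scan_messages(messages)
for index, label in hits:
    print(f"message {index}: contains {label}")
```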

How It Works

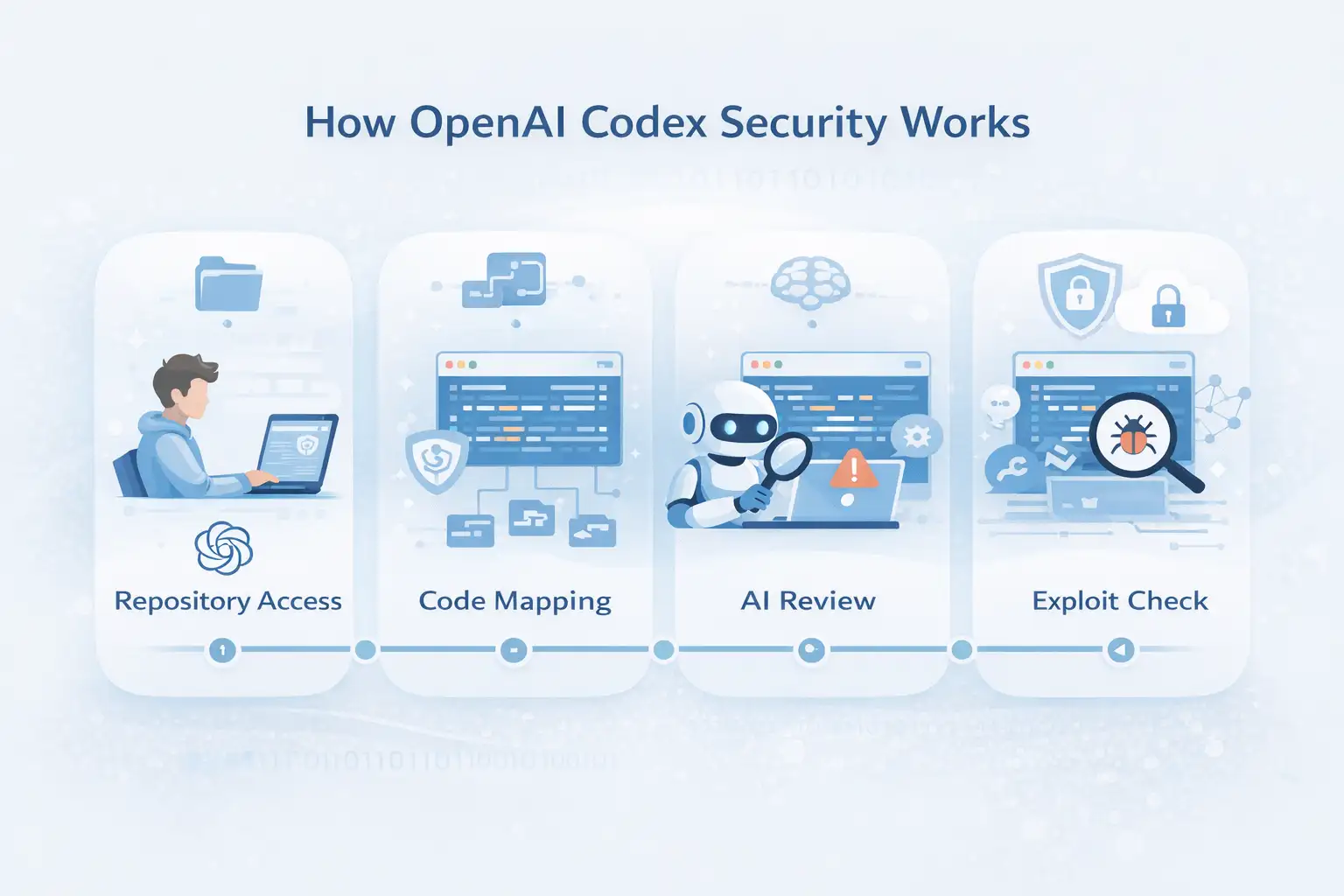

How OpenAI Codex Security works, step by step

Even though the technical details of how AI security systems work differ, most of them follow a set process.

Repository access

Developers give the system access to a project repository so it can analyze the code.

Indexing code

The system maps out the whole code structure so it can see how files and functions are related to each other.

AI reasoning analysis

The model checks the logic of the code and finds any possible flaws.

Exploit validation

Some systems try to reproduce the exploit in a safe, isolated environment to confirm that the flaw is real before reporting it.

Fix suggestions

Lastly, the AI suggests changes to the code to fix the problems it found.

Every step helps make sure that vulnerabilities are found correctly.
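The indexing step is the easiest one to sketch. The example below uses Python's standard `ast` module to map each top-level function to the functions it calls. It is only an illustration of what "mapping how functions relate" can mean for one Python file; the sample source and the `index_functions` helper are invented for this example and say nothing about how OpenAI Codex Security is implemented internally.

```python
import ast


def index_functions(source):
    """Map each top-level function name to the plain function names it calls."""
    tree = ast.parse(source)
    index = {}
    for node in tree.body:
        if isinstance(node, ast.FunctionDef):
            calls = set()
            for child in ast.walk(node):
                # Only direct calls like foo(...); method calls such as x.read() are skipped.
                if isinstance(child, ast.Call) and isinstance(child.func, ast.Name):
                    calls.add(child.func.id)
            index[node.name] = sorted(calls)
    return index


source = '''
def load(path):
    return open(path).read()

def handler(request):
    data = load(request)
    return render(data)
'''

index = index_functions(source)
for name, calls in index.items():
    print(name, "calls", calls)
```

With an index like this, a later analysis step can ask questions such as "which request handlers eventually reach a file-opening call", which is the kind of relationship a line-by-line pattern scanner cannot see.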

Safe Use

Security teams often ask an important question.

Are AI security tools safe to use?

Experts say that OpenAI Codex Security works best under human oversight.

Using it correctly usually means:

• AI-assisted vulnerability detection

• Human review of the results

• Controlled patch deployment

• Continuous monitoring

Before applying patches, many companies bring in outside security experts to validate the AI's findings.

Companies that offer security consulting, like Hoplon Infosec, can help businesses figure out how to add AI-driven security scanning to their development environments.

Common Mistakes

Mistakes and harmful practices to avoid

AI tools are very useful, but using them the wrong way can make things more dangerous.

A common mistake is thinking that AI analysis is always right.

AI systems can sometimes miss complex vulnerabilities or give false positives.

Another risk is depending too much on automation.

When developers skip manual review, they might accidentally introduce new problems when they apply automated patches.

Lastly, companies sometimes deploy security tools without configuring them correctly.

That can lead to inaccurate results or unnecessary alerts.

Balance between automation and human judgment remains important.

Expert Help

Managing security gets a lot harder for big companies.

Companies that deal with financial systems, health records, or sensitive infrastructure need expert security checks.

Professional help is worth seeking when:

• Large codebases need advanced vulnerability testing.

• Compliance rules apply.

• You need to plan for how to respond to incidents.

• Security teams don't have the right skills.

This is where specialized cybersecurity companies come in.

Companies like Hoplon Infosec help businesses find weaknesses, test their security, and carry out AI-assisted security analysis.

Advanced Methods

More and more, modern security operations use more than one method.

OpenAI Codex Security and other AI analysis tools are only one part.

Some advanced methods include:

• Penetration testing

• Threat modeling

• Red team exercises

• Secure code review

• Continuous monitoring systems

Using more than one of these tools together gives you better protection than just using one.

Prevention

It's still better to prevent vulnerabilities in the first place than to fix them later.

Security teams usually recommend several preventive measures.

First, use secure coding practices. During development, developers should follow established secure coding guidelines.

Second, automate security checks in the CI/CD pipeline.

Third, check dependencies for security holes on a regular basis.

Finally, maintain ongoing security training for development teams.

Using tools like OpenAI Codex Security along with these practices makes it more likely that problems will be found early.
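An automated check in a CI/CD pipeline often comes down to a gate: the build fails if a scan reports anything at or above a chosen severity. The sketch below shows that logic under stated assumptions. The finding format, the severity names, and the `gate` function are hypothetical and do not reflect the output of any specific scanner.

```python
# Hypothetical severity scale; higher rank means more serious.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def gate(findings, fail_at="high"):
    """Return (exit_code, blocking_findings) for a list of scan findings."""
    threshold = SEVERITY_RANK[fail_at]
    blocking = [f for f in findings if SEVERITY_RANK[f["severity"]] >= threshold]
    return (1 if blocking else 0), blocking


# Made-up findings standing in for a scanner's report.
findings = [
    {"id": "F-1", "severity": "low", "title": "Verbose error message"},
    {"id": "F-2", "severity": "critical", "title": "SQL injection in search"},
]

exit_code, blocking = gate(findings)
for finding in blocking:
    print(f"BLOCKING {finding['id']}: {finding['title']} ({finding['severity']})")
print("exit code:", exit_code)
```

In a real pipeline the script would end with `sys.exit(exit_code)` so that a nonzero code stops the build. Starting with a high threshold and lowering it over time keeps the gate from blocking every build on day one.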

Timeline and what to expect

Companies usually adopt AI security tools gradually.

At first, teams run the system in observation mode so they can look over the results without making any changes.

Then developers start to incorporate the tool's suggestions into their workflows.

The technology becomes a part of ongoing security monitoring over time.

Depending on the size of the company and the complexity of the system, the process can take months.

FAQs

What is the purpose of OpenAI Codex Security?

OpenAI Codex Security uses AI to look at software repositories and find security holes, then suggests ways to fix them.

Can AI security tools take the place of human security engineers?

No. AI tools help with security analysis, but their results still need to be checked and approved by an expert.

Does OpenAI Codex Security fix code on its own?

Some implementations make suggestions for patches. Before deployment, developers must look over and approve changes.

Do AI vulnerability scanners work?

They can be helpful, but they're not perfect. Security experts say that you should use them with manual review.

Who gets the most out of AI security tools?

Big software companies, tech platforms, and businesses that have to deal with complicated codebases get the most out of them.

Useful Tips

If your company wants to use AI security tools, think about these useful tips.

Begin small.

Before using AI analysis more widely, test it on internal repositories.

Write down your findings carefully.

Like any other security report, AI outputs should be tracked and checked for accuracy.

Teach development teams.

Understanding how AI tools work makes them easier to use and less confusing.

And maybe most importantly, maintain human oversight.

Even the most advanced systems still need experienced security experts.

Conclusion

As apps get bigger and more complicated, it gets harder to keep software safe.

AI tools like OpenAI Codex Security have changed a lot about how vulnerabilities are found and fixed.

Instead of relying only on manual reviews, development teams can now use AI to look through repositories, find possible problems, and suggest fixes.

But automation isn't enough on its own.

Companies usually get better results when they use AI analysis along with professional cybersecurity knowledge.

Security experts like Hoplon Infosec keep an eye on things and make sure that businesses use these tools correctly and follow the rules.

Smart systems and human analysts will probably have to work together more in the future to keep software safe.

And in the end, that partnership could make software safer for everyone.

Sources

OpenAI's official statements

Cybersecurity research papers about using AI to find weaknesses

Hoplon Insight

Companies that use AI security tools should use both automated scanning and expert checking.

Hoplon Infosec helps companies set up safe DevSecOps pipelines, find weaknesses, and use AI security tools in a way that is safe.

About the Author

This article was written by a cybersecurity editorial team that focuses on software security, finding vulnerabilities, and DevSecOps practices for businesses.

Last Updated: 08/03/2026
