OpenAI Daybreak AI vulnerability detection

Hoplon InfoSec

12 May, 2026

OpenAI Daybreak: How AI-Powered Vulnerability Detection is Rewriting Cybersecurity in 2026

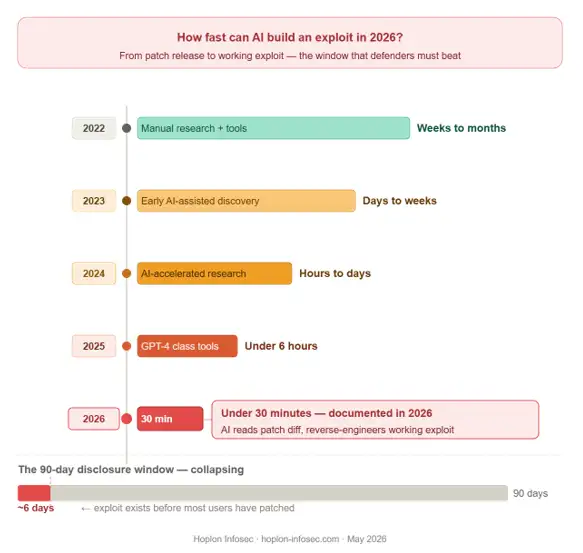

Thirty minutes. That is all it takes for an AI to look at a patch diff and reverse-engineer a working exploit. Not hours. Not days. Thirty minutes. That is the world we are living in right now, and that is exactly the world OpenAI's new Daybreak initiative was built to fight back against.

If you are a student studying cybersecurity, a developer building apps, or just someone who uses software every day, this matters to you. OpenAI Daybreak AI vulnerability detection is one of the most significant defensive security launches of 2026. This article breaks down what it is, how it works, who is using it, and whether it actually changes anything.

Let us get into it.

Key Takeaways

- Daybreak puts AI on the defense side of cybersecurity for the first time at scale

- It does not replace human penetration testers; it makes them faster and more effective

- AI-generated patches must still be reviewed by humans before deployment

- Access is currently controlled and request-based via openai.com/daybreak

- Both OpenAI and Anthropic building similar platforms signals that AI-driven security is now standard infrastructure, not a novelty

What is OpenAI Daybreak?

A May 2026 AI cybersecurity platform using GPT-5.5-Cyber to detect, test, and patch software vulnerabilities automatically.

Is Daybreak publicly available?

Not fully. Access is request-based as of May 2026, with enterprise access via OpenAI's sales team.

Can AI replace penetration testers?

No. AI handles volume and pattern recognition. Humans handle business logic, creative attack chaining, and final judgment.

What Exactly is OpenAI Daybreak?

OpenAI Daybreak is a cybersecurity platform launched by OpenAI in May 2026. It uses frontier AI models, including a specialized model called GPT-5.5-Cyber, to automatically find security vulnerabilities in software, test them in isolated environments, and suggest or validate patches before malicious actors can exploit them.

Think of it like having a security expert on your team who works 24 hours a day, never gets tired, and can scan thousands of lines of code in the time it takes you to make a cup of coffee.

It is built on top of OpenAI's Codex Security platform and is designed to fit directly into the software development pipeline, so security stops being an afterthought and becomes part of the build process from day one.

Why This Matters Right Now

Here is something worth sitting with for a moment. In March 2026, HackerOne, one of the world's largest bug bounty platforms, paused its open-source bug bounty program. The reason was not a lack of funding. It was the opposite.

AI-assisted research had created such a flood of vulnerability reports that human maintainers simply could not keep up with triage. The tools meant to help find bugs were creating a triage fatigue crisis.

Security researcher Himanshu Anand published a post in early May 2026 arguing that the traditional 90-day disclosure policy is effectively dead. When multiple independent researchers can find the same bug within six weeks using AI tools, and an exploit can be built in under 30 minutes once a patch drops, the old timeline assumptions collapse entirely.

This is the gap Daybreak is trying to close. Not just finding vulnerabilities faster, but validating and patching them faster so defenders are not always playing catch-up.


The 30-Minute Exploit Window: Why Defenders Were Losing

The fundamental problem Daybreak was designed to solve is a timing gap. When a software patch is released publicly, an attacker using AI tools can analyze the diff, understand what was broken, and build a working exploit in under 30 minutes. That is not a theoretical risk. That is the documented reality of 2026.

The 90-day disclosure policy, the long-standing standard where researchers give vendors 90 days to patch before going public, was built for a world where exploit development took weeks or months. That world no longer exists. When AI compresses that timeline to minutes, the entire framework of responsible disclosure breaks down.

The result: defenders were always reacting, never ahead. Daybreak was built to change that by bringing the same AI acceleration to the defense side.
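
The timing gap can be made concrete with a little arithmetic. The sketch below is illustrative only: the 30-minute figure is the one quoted in this article, while the rollout delays and the `exposure_window` helper are hypothetical, chosen to show how the gap grows when patch deployment is slower than exploit development.

```python
from datetime import timedelta

# Figure quoted in this article: time for an AI-assisted attacker to turn
# a public patch diff into a working exploit.
EXPLOIT_BUILD_TIME = timedelta(minutes=30)


def exposure_window(patch_rollout_delay: timedelta,
                    exploit_build_time: timedelta = EXPLOIT_BUILD_TIME) -> timedelta:
    """Time during which an exploit may exist but the patch is not yet deployed.

    Returns zero if the patch is rolled out before an exploit could be built.
    """
    gap = patch_rollout_delay - exploit_build_time
    return max(gap, timedelta(0))


if __name__ == "__main__":
    # Hypothetical rollout delays, chosen only for illustration.
    scenarios = [
        ("same hour", timedelta(minutes=20)),
        ("next day", timedelta(days=1)),
        ("monthly cycle", timedelta(days=30)),
    ]
    for label, delay in scenarios:
        print(f"{label}: exposed for {exposure_window(delay)}")
```

Under these assumptions, only the team that deploys within the same half hour has no exposure at all; every slower rollout leaves a window that an attacker with a ready exploit can use.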

How Daybreak Works: The 3-Layer AI Model Stack

This is where it gets technically interesting. Daybreak is not a single AI model. It runs on three different versions of GPT-5.5, each designed for a specific type of user and use case.

Layer 1: GPT-5.5 (Standard)

This is the general-purpose version with standard safety safeguards. Most developers and security teams working in normal production environments will interact through this layer. It handles code review, threat modeling suggestions, and basic dependency risk analysis.

Layer 2: GPT-5.5 Trusted Access for Cyber

This layer is for verified defensive security professionals working in authorized environments. Companies like Akamai, Cisco, and CrowdStrike are already operating at this tier. It unlocks deeper analysis capabilities, including realistic attack path modeling and more aggressive vulnerability testing.

Layer 3: GPT-5.5-Cyber

This is the most powerful and the most carefully controlled. It is designed specifically for red teaming, penetration testing, and controlled exploit validation. Access is tightly restricted. This model can simulate realistic attack scenarios to test whether a patch actually holds up under pressure.

| Model | Who It Is For | Key Capability | Access Level |
| --- | --- | --- | --- |
| GPT-5.5 Standard | All developers | Code review, threat modeling | Open (request-based) |
| GPT-5.5 Trusted Access | Verified security teams | Deep attack path analysis | Vetted organizations |
| GPT-5.5-Cyber | Red teams, pen testers | Exploit simulation, patch validation | Highly restricted |
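
One way to picture the tiered trust model is as a simple capability check. This is a minimal sketch, not OpenAI's implementation: the `Tier` enum, the capability names, and the assumption that higher tiers inherit everything below them are all hypothetical, paraphrased from the three layers described above.

```python
from enum import IntEnum


class Tier(IntEnum):
    """Access tiers named in the article; the numeric ordering is an assumption."""
    STANDARD = 1
    TRUSTED_ACCESS = 2
    CYBER = 3


# Hypothetical capability names, paraphrased from the layer descriptions.
REQUIRED_TIER = {
    "code_review": Tier.STANDARD,
    "threat_modeling": Tier.STANDARD,
    "attack_path_analysis": Tier.TRUSTED_ACCESS,
    "exploit_simulation": Tier.CYBER,
    "patch_validation": Tier.CYBER,
}


def is_allowed(user_tier: Tier, capability: str) -> bool:
    """Deny unknown capabilities by default; otherwise require a high enough tier."""
    required = REQUIRED_TIER.get(capability)
    return required is not None and user_tier >= required


if __name__ == "__main__":
    print(is_allowed(Tier.STANDARD, "code_review"))
    print(is_allowed(Tier.STANDARD, "exploit_simulation"))
```

The deny-by-default branch is the design choice worth noting: a capability that is not explicitly mapped to a tier is refused, which keeps a powerful feature from becoming available by accident.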

Core Capabilities: What Daybreak Actually Does

When OpenAI describes Daybreak, they frame it around what they call a "security flywheel." The idea is that defenders can bring security checks into the everyday development loop, not just at the end before shipping. Here is what that looks like in practice.

Automated Threat Modeling

Daybreak can build an editable threat model for an entire code repository. It does not just look for known CVEs. It focuses on realistic attack paths, meaning it thinks about how an actual attacker would move through the code, not just which lines have obvious errors. This is a meaningful difference. Most static analysis tools flag issues. Daybreak tries to understand impact.

Vulnerability Testing in Isolated Environments

Found a potential flaw? Daybreak tests it in a sandboxed, isolated environment before flagging it. This cuts down on false positives significantly. In our review of how the system is described by OpenAI, the isolation layer is key to making the output actually useful to security teams who are already drowning in alerts.

AI-Generated Patch Proposals

This is the part that most developers will find immediately valuable. Once a vulnerability is identified and confirmed, Daybreak proposes a fix. Not just "fix this line" but a contextual patch suggestion that accounts for how the change might affect other parts of the codebase.

Dependency Risk Analysis

Third-party libraries are where a huge percentage of vulnerabilities live. Daybreak analyzes dependency chains and flags risky packages, including ones that may not have known CVEs yet but show patterns associated with future risk.
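
For contrast, the conventional baseline that this kind of analysis extends is a lookup of pinned versions against known advisories. The sketch below shows only that baseline, not Daybreak's pattern-based analysis. The package names, the `ADVISORIES` data, and both helper functions are hypothetical.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical advisory data: package name -> versions considered risky.
ADVISORIES: Dict[str, Set[str]] = {
    "examplelib": {"1.0.0", "1.0.1"},
    "otherpkg": {"2.3.0"},
}


def parse_pins(requirements_text: str) -> List[Tuple[str, str]]:
    """Extract (name, version) pairs from lines of the form 'name==version'."""
    pins = []
    for raw in requirements_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if "==" not in line:
            continue  # blank or unpinned lines are skipped in this sketch
        name, version = line.split("==", 1)
        pins.append((name.strip().lower(), version.strip()))
    return pins


def risky_pins(requirements_text: str,
               advisories: Dict[str, Set[str]] = ADVISORIES) -> List[Tuple[str, str]]:
    """Return the pinned packages whose exact version appears in the advisory data."""
    return [
        (name, version)
        for name, version in parse_pins(requirements_text)
        if version in advisories.get(name, set())
    ]


if __name__ == "__main__":
    sample = "examplelib==1.0.1\notherpkg==2.4.0  # already upgraded\n"
    print(risky_pins(sample))
```

A lookup like this can only flag what is already in the advisory data, which is exactly the limitation the article describes for CVE-based scanners.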

Detection and Remediation Guidance

Beyond just code, Daybreak offers remediation guidance that fits into existing security workflows. It is designed to integrate with the everyday development loop, meaning it can feed directly into a team's CI/CD pipeline.

Who is Already Using OpenAI Daybreak?

Several major enterprise companies are already integrating Daybreak capabilities through the Trusted Access for Cyber initiative. The list as of May 2026 includes:

· Akamai

· Cisco

· Cloudflare

· CrowdStrike

· Fortinet

· Oracle

· Palo Alto Networks

· Zscaler

These are not small players. Combined, they protect a significant portion of global internet infrastructure. The fact that they are already on board is a strong signal that Daybreak is not just a research project. It is being built for real-world deployment at scale.

OpenAI has also said it is working with government and industry partners to eventually deploy "more cyber-capable models," though specifics remain vague. This OpenAI cybersecurity initiative is clearly designed to grow beyond its initial enterprise rollout.

The AI-Accelerated Threat Landscape: Why Defenders Were Losing

To understand why tools like Daybreak matter, you need to understand what changed over the last two years. AI did not just help defenders. It helped attackers too, arguably more.

Here is a quick picture of how AI has shifted the threat landscape in 2026:

· Vulnerability discovery time: Dropped from weeks to days, sometimes hours

· Exploit development time: Can be under 30 minutes for known patch diffs

· Volume of reported bugs: Spiked sharply due to AI-assisted research

· Human triage capacity: Essentially flat, creating a massive bottleneck

· 90-day disclosure window: Effectively meaningless when exploits appear in days

How fast can AI find security vulnerabilities? In controlled research settings in 2026, AI-assisted tools have demonstrated the ability to identify exploitable vulnerabilities in well-known software within hours of a patch being released, sometimes before most users have even updated.

This is the environment Daybreak was designed for. The patching process cannot keep up with AI-accelerated discovery using traditional methods alone. Daybreak brings AI to the defense side to help close that gap.

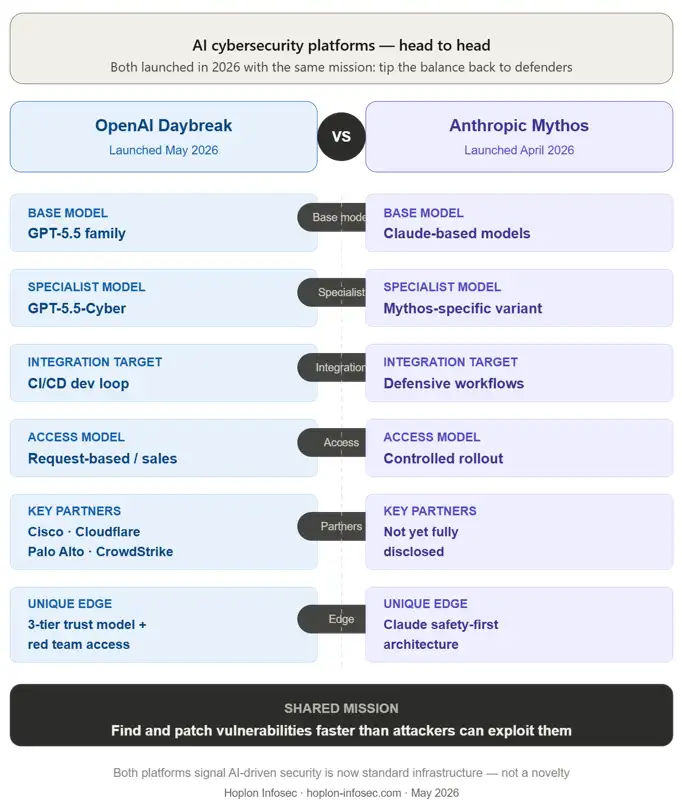

Daybreak vs. Anthropic Mythos: Two Giants, Same Mission

OpenAI is not alone in this space. Anthropic launched Mythos in April 2026 with a very similar stated goal: use AI to tilt the balance back in favor of defenders. Both platforms are trying to solve the same core problem. The approaches differ in some meaningful ways.

| Feature | OpenAI Daybreak | Anthropic Mythos |
| --- | --- | --- |
| Base Model | GPT-5.5 family | Claude-based models |
| Access Model | Request-based / sales contact | Similar controlled rollout |
| Key Partners | Cisco, Cloudflare, Palo Alto Networks | Not yet fully disclosed |
| Specialized Model | GPT-5.5-Cyber (red team) | Mythos-specific variant |
| Integration Target | CI/CD development loop | Defensive security workflows |
| Current Status | Active, May 2026 | Active, April 2026 |

What this tells us is that automated vulnerability detection AI is becoming a standard tool, not a novelty. When two of the largest AI companies in the world are both building defensive security platforms simultaneously, the direction is clear. AI-driven security is the next infrastructure layer.

What We Noticed When Analyzing Daybreak's Architecture

When we looked closely at how OpenAI describes Daybreak's workflow, a few things stood out.

The three-model stack is unusual. Most security tools offer one interface. The decision to separate standard users from verified defenders from red teamers is a deliberate trust architecture. It limits the blast radius if the system is misused. GPT-5.5-Cyber is powerful enough that unrestricted access would create real risk.

We also noticed the emphasis on editable threat models. Most automated tools produce static reports. Daybreak's threat models can be modified by the security team. This is important because it means human judgment stays in the loop. The AI identifies and proposes. The human decides.

The dependency risk analysis piece is particularly interesting. Traditional vulnerability scanners look for known CVEs in your package list. An AI-driven scanner can potentially flag packages that show architectural patterns associated with future vulnerabilities, even before a CVE is assigned. That is a qualitatively different kind of protection.

How to Access OpenAI Daybreak in 2026

Right now, access to Daybreak is controlled. OpenAI is not offering a public self-service signup. Here is how to get in:

Step 1: Request a Vulnerability Scan. Go to openai.com/daybreak and submit a request for a vulnerability scan on your codebase. This is the lowest-friction entry point.

Step 2: Contact the Sales Team. For enterprise integration, larger teams should contact OpenAI's sales team directly. This is the path for organizations that want Trusted Access for Cyber capabilities.

Step 3: Prepare Your Repository. Before the scan, make sure your codebase is organized, documented, and accessible. Daybreak works with repository-level access, so a clean structure will produce better results.

Step 4: Review the Threat Model Output. When results come back, you will receive an editable threat model. Assign a security lead to review it, prioritize by impact, and begin remediation on the highest-risk attack paths first.

Step 5: Validate Patches Before Deploying. Use Daybreak's patch validation capability to confirm that proposed fixes actually close the vulnerability before pushing to production. This is the step most teams currently skip, and it is often where breaches happen.
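
The prioritization in Step 4 can be sketched as a small data structure. This is a hypothetical model, not Daybreak's output format: the `AttackPath` fields, the 1 to 5 scales, and the impact-times-likelihood score are assumptions chosen to illustrate ranking attack paths so the riskiest are handled first.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AttackPath:
    """One entry in an editable threat model (hypothetical schema)."""
    name: str
    impact: int        # 1 (low) to 5 (critical), set or adjusted by the reviewer
    likelihood: int    # 1 (unlikely) to 5 (expected)
    owner: str = "unassigned"
    notes: List[str] = field(default_factory=list)

    @property
    def risk(self) -> int:
        # Simple impact x likelihood score; real scoring schemes vary.
        return self.impact * self.likelihood


def prioritize(paths: List[AttackPath]) -> List[AttackPath]:
    """Highest risk first; ties broken by impact, then by name for stable output."""
    return sorted(paths, key=lambda p: (-p.risk, -p.impact, p.name))


if __name__ == "__main__":
    model = [
        AttackPath("verbose error pages", impact=2, likelihood=2),
        AttackPath("auth token replay", impact=5, likelihood=3),
        AttackPath("unvalidated upload", impact=3, likelihood=5),
    ]
    for path in prioritize(model):
        print(path.risk, path.name)
```

Because the scores are plain fields, the security lead can edit them after review and re-run the ranking, which keeps the human decision in the loop.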

Who should apply right now? Enterprise security teams, DevSecOps engineers, companies with active bug bounty programs, and organizations running critical infrastructure. Small development teams should monitor the rollout and apply when broader access opens up.

What This Means for Developers and Students Learning Security

For students studying cybersecurity in 2026, this is genuinely exciting. Here is why.

The emergence of AI-powered vulnerability scanners does not eliminate the need for human security professionals. It changes what those professionals need to know. Understanding how to work with AI security tools, interpret AI-generated threat models, and validate AI-proposed patches is becoming a core skill.

The shift is from reactive to proactive security. Traditional security work often looks like this: ship the software, wait for someone to find a bug, patch it, and ship again. Daybreak and tools like it are designed to make security part of the build process from the start. That is called DevSecOps, and it is where the industry is heading fast.

For CI/CD pipelines specifically, integrating an AI security tool like Daybreak means every code commit can be screened before it reaches production. That is a major shift from the current reality where most small teams do not run thorough security checks until something goes wrong.
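
As a rough illustration of screening every commit, a pipeline step can read a findings report and fail the build when confirmed, high-severity issues are present. The report format here (a JSON list of objects with `id`, `severity`, and `confirmed` keys) is an assumption made for this sketch, not a documented Daybreak output.

```python
import json
from typing import List

# Severities that should stop a build in this sketch (an assumed policy).
BLOCKING_SEVERITIES = {"critical", "high"}


def blocking_findings(findings: List[dict]) -> List[dict]:
    """Return confirmed findings whose severity should fail the build."""
    return [
        f for f in findings
        if f.get("confirmed")
        and str(f.get("severity", "")).lower() in BLOCKING_SEVERITIES
    ]


def main(path: str) -> int:
    """Read a findings file and return a process exit code (0 = pass, 1 = fail)."""
    with open(path, encoding="utf-8") as fh:
        findings = json.load(fh)
    blockers = blocking_findings(findings)
    for f in blockers:
        print(f"BLOCKING: {f.get('id', '?')} ({f['severity']})")
    return 1 if blockers else 0
```

A CI job could call `main("findings.json")` and pass the return value to `sys.exit`, so that a nonzero result blocks the merge. Filtering on `confirmed` reflects the article's point that validated findings matter more than raw alerts.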

Should small teams worry or celebrate? Mostly celebrate with realistic expectations. These tools are powerful, but they are not magic. A Daybreak scan will not replace a skilled penetration tester. It will surface things faster, propose fixes more efficiently, and help teams that cannot afford a full security team stay meaningfully protected.

Mistakes to Avoid When Using AI Security Tools

Adopting a tool like Daybreak without understanding its limits can create a false sense of security. Here are the mistakes worth watching out for.

Mistake 1: Treating AI output as final truth. AI-generated vulnerability reports are starting points, not verdicts. A patch proposed by GPT-5.5 should be reviewed by a human before deployment. Hallucinated fixes are real. We have seen reports in 2026 of AI-generated patches that technically resolved the flagged issue but introduced a new one.

Mistake 2: Skipping the threat model review. Daybreak produces editable threat models. Many teams will receive them and file them away. That defeats the purpose. The threat model is the map. If you do not read the map, you do not know where the risks are.

Mistake 3: Only running scans before release. Security scanning works best when it is continuous, not a one-time event before launch. Build the scan into your CI/CD pipeline so every code change is checked automatically.

Mistake 4: Ignoring dependency risk signals. Developers tend to focus on their own code and overlook third-party packages. Daybreak's dependency risk analysis exists for a reason. A vulnerability in a popular npm package can affect thousands of applications overnight.
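
To guard against Mistake 1 in particular, a team can encode its release rule so that no AI-proposed patch ships on the model's word alone. The sketch below is one hypothetical way to express that rule. The `PatchCandidate` fields and the three checks are assumptions, not part of any Daybreak API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PatchCandidate:
    """An AI-proposed fix plus the evidence gathered about it (hypothetical schema)."""
    finding_id: str
    exploit_still_reproduces: bool   # result of re-running the proof of concept after patching
    regression_tests_passed: bool    # did the existing test suite survive the change?
    human_reviewer: str = ""         # empty means nobody has signed off


def deployment_blockers(patch: PatchCandidate) -> List[str]:
    """List every reason the patch must not ship; an empty list means it may proceed."""
    reasons = []
    if patch.exploit_still_reproduces:
        reasons.append("patch does not close the vulnerability")
    if not patch.regression_tests_passed:
        reasons.append("regression tests failed")
    if not patch.human_reviewer.strip():
        reasons.append("no human review recorded")
    return reasons


if __name__ == "__main__":
    candidate = PatchCandidate("F1", exploit_still_reproduces=False,
                               regression_tests_passed=True)
    print(deployment_blockers(candidate))
```

The regression-test check targets the failure mode described above, where a patch resolves the flagged issue but introduces a new one.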

Expert Tips for Getting the Most Out of Daybreak

Tip 1: Use the threat model as a team communication tool. The editable threat model is not just a technical artifact. Share it in sprint planning. Make it part of how the whole team understands risk, not just the security person.

Tip 2: Combine AI findings with manual review on critical paths. For payment systems, authentication flows, and any feature handling personal data, layer a human review on top of AI output. AI catches broad patterns. Humans catch business logic flaws that the model may not fully understand.

Tip 3: Track your remediation rate. Daybreak gives you findings. Track how fast your team closes them. A backlog of open vulnerabilities from an AI tool is almost as risky as not scanning at all, because now you know about the problem and are on the hook.

Tip 4: Stay current on GPT-5.5-Cyber access updates. OpenAI has said it plans to expand access over time. Check openai.com/daybreak periodically for updates on eligibility, especially if you are running a bug bounty program or a small security team.
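
Tracking the remediation rate from Tip 3 needs very little tooling. The following is a minimal sketch under stated assumptions: the `Finding` record and the 30-day staleness threshold are hypothetical, and a real team would pull these dates from its issue tracker.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import List, Optional


@dataclass
class Finding:
    """A reported vulnerability with its open and (optional) close dates."""
    opened: date
    closed: Optional[date] = None


def mean_days_to_remediate(findings: List[Finding]) -> Optional[float]:
    """Average days from open to close over closed findings; None if none are closed."""
    durations = [(f.closed - f.opened).days for f in findings if f.closed is not None]
    return mean(durations) if durations else None


def stale_backlog(findings: List[Finding], today: date,
                  older_than_days: int = 30) -> List[Finding]:
    """Open findings that have been waiting longer than the threshold."""
    return [
        f for f in findings
        if f.closed is None and (today - f.opened).days > older_than_days
    ]


if __name__ == "__main__":
    history = [
        Finding(date(2026, 5, 1), date(2026, 5, 4)),
        Finding(date(2026, 5, 1), date(2026, 5, 8)),
        Finding(date(2026, 3, 1)),
    ]
    print(mean_days_to_remediate(history))
    print(len(stale_backlog(history, today=date(2026, 5, 12))))
```

Reviewing both numbers together is the point: a fast average close time can hide a handful of old findings that nobody owns.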

Quick Security Checklist:

· Visit openai.com/daybreak and submit a scan request for your main repository.

· Identify who on your team will own the threat model review.

· Add a dependency check step to your CI/CD pipeline.

· Schedule a monthly review of open vulnerability findings.

· Test one proposed patch in a staging environment before pushing to production.

· Share the Daybreak overview with your team so everyone understands what it does and does not do.

People Also Ask About OpenAI Daybreak

What is OpenAI Daybreak?

OpenAI Daybreak is a cybersecurity initiative launched in May 2026. It uses AI models, including GPT-5.5-Cyber, to automatically detect vulnerabilities in software code, test them safely, and suggest validated patches. It is built on OpenAI's Codex Security platform and is designed to integrate into everyday development workflows.

How does GPT-5.5-Cyber differ from regular GPT-5.5?

GPT-5.5-Cyber is a specialized version of GPT-5.5 built specifically for red teaming, penetration testing, and controlled exploit validation. It has fewer standard safety restrictions because it is designed for authorized offensive security work. Regular GPT-5.5 has broader safeguards and is used for general-purpose tasks, including standard code review.

Is OpenAI Daybreak available to the public?

Not fully, as of May 2026. Access is currently controlled through a request-based system. Organizations can submit a request for a vulnerability scan or contact OpenAI's sales team for enterprise integration. Broader public access is expected in future rollouts.

How does Daybreak compare to Anthropic Mythos?

Both are AI-driven defensive security platforms launched within weeks of each other in 2026. Daybreak runs on the GPT-5.5 model family and has confirmed partnerships with companies like Cisco and Palo Alto Networks. Mythos is built on Anthropic's Claude models. Both aim to help defenders find and patch vulnerabilities faster than attackers can exploit them. The core mission is identical; the underlying models and specific integrations differ.

Can AI fully replace human penetration testers?

No. AI tools like Daybreak are powerful at surface-level scanning, pattern recognition, and high-volume analysis. Human penetration testers excel at understanding business logic, chaining complex attack paths, and thinking creatively in ways current AI cannot fully replicate. The most effective security setups in 2026 combine both.

What is the "90-day disclosure policy," and why does it matter now?

The 90-day disclosure policy is a widely followed standard where a researcher who finds a vulnerability gives the affected vendor 90 days to release a patch before publicly disclosing the bug.

In 2026, security researchers are arguing this timeline has broken down because AI tools can compress exploit development from months to minutes. When a patch is released and an AI can reverse-engineer an exploit in under 30 minutes, the 90-day window no longer provides meaningful protection.

Future Implications: Where This Goes Next

Here is the honest reality. Daybreak is the beginning of something larger, not the finished product.

As AI models become better at understanding code semantics, vulnerability detection will move from pattern-matching to genuine reasoning about software behavior. That means fewer false positives, better exploit predictions, and patch suggestions that actually account for how the whole system works, not just the flagged line.

The bigger shift is structural. Security will stop being a specialized function that only certain companies can afford. With AI tools in the pipeline, a two-person startup can potentially run the same quality of vulnerability scanning that a major bank runs today. That democratization of security is genuinely meaningful.

There is also a darker possibility worth naming. The same improvements that make Daybreak better at defense also make offensive AI tools better at attack. The arms race does not stop. But the fact that companies like OpenAI are investing seriously in the defense side is a structural shift that was not present two years ago.

OpenAI Daybreak and What Comes Next

OpenAI Daybreak AI vulnerability detection represents a genuine shift in how the security community can respond to the AI-accelerated threat environment of 2026.

It does not solve everything. Triage fatigue, hallucinated patches, and the ongoing arms race between attackers and defenders are all still real problems.

But the direction is right. Putting AI on the defense side, integrating security into the development loop from day one, and building tiered access models that protect powerful capabilities from misuse are all thoughtful responses to a genuinely hard problem.

If you are a student, start learning about DevSecOps and AI-assisted security now. It is where the jobs and the interesting problems are going to be for the next decade.

If you are a developer, request a Daybreak scan. See what it finds. Use the threat model. The tools are there.

The attackers are already using AI. The question is whether defenders are keeping up.

Now that AI can build a working exploit in under 30 minutes, the traditional 90-day disclosure window is no longer enough to keep you safe.
