ChatGPT for Cybersecurity Just Changed Cyber Defense


Hoplon InfoSec

16 Apr, 2026

Did OpenAI really launch a cybersecurity-focused version of ChatGPT?

Yes. On April 14, 2026, OpenAI announced GPT-5.4-Cyber, a defensive cybersecurity variant with more permissive support for legitimate security work and restricted access through Trusted Access for Cyber. It is not a public free-for-all release, and that detail matters as much as the model itself.

ChatGPT for cybersecurity just became a much more specific conversation. Before this week, most people used the phrase loosely to mean “use a general chatbot to help with security tasks.”

Now there is a named model, a trust-based access path, and a clear signal from OpenAI that cyber work is being treated as a separate deployment problem.

For defenders, that is big news. For everyone else, it is a warning label. The same capability that helps a blue team move faster can also lower friction for abuse if access and policy controls are weak. OpenAI’s answer is not broad public release. It is controlled rollout, identity checks, and tighter deployment guardrails.

Technical Overview

| Item | Verified detail |
| --- | --- |
| Model name | GPT-5.4-Cyber |
| Announced | April 14, 2026 |
| Primary purpose | Defensive cybersecurity workflows |
| Access model | Through Trusted Access for Cyber and vetted users/teams |
| Public availability | Not generally public; restricted deployment |
| Key capability | Lower refusal boundary for legitimate cyber work; support for binary reverse engineering |
| Related strategy | Identity checks, iterative deployment, investment in defensive tooling |
| Adjacent OpenAI security work | Codex Security, cyber grants, broader resilience push |

There are no public CVE IDs, malware family names, or threat actor names attached to this launch itself. That is important to state clearly. This is a product and deployment story, not a vulnerability disclosure.

Most AI coverage gets lost in hype. This one should not. A defender-focused model changes the daily economics of security operations.

If a system can analyze compiled software, assist with vulnerability review, summarize noisy signals, and reduce false refusals during legitimate testing, it can cut friction in places where analysts lose time every day.

The business impact is different depending on who you are. A mature enterprise sees a workflow accelerator. A smaller team sees a possible force multiplier.

A regular user probably sees nothing directly, at least not yet, because access is gated and the product is aimed at defenders, vendors, researchers, and teams protecting critical systems.

The real story is not only the model. It is the shift in deployment logic. OpenAI is saying, in effect, that cybersecurity is too dual-use to manage with one universal refusal setting.

That is a serious policy move. It suggests the future OpenAI cybersecurity model will be judged not only by benchmark strength but also by how well its access controls hold up under pressure.

What is ChatGPT for Cybersecurity?

The broad meaning is any use of a language model as a cybersecurity AI assistant. That includes summarizing alerts, drafting detection logic, explaining suspicious code, turning raw intel into a readable brief, or helping a Tier 1 analyst ask better questions.

The narrow meaning, and the one that matters most after April 14, is a purpose-tuned AI chatbot for cybersecurity with looser restrictions for legitimate defender activity.

That is where GPT-5.4-Cyber enters the picture. It is not just a branding tweak. It is a model and access strategy built around defensive security tasks that often trip safety systems in general tools.

So, what is ChatGPT for cybersecurity in practical terms? It is no longer just “ChatGPT but used by security people.” It is becoming a separate product class: restricted, auditable, defender-first, and explicitly designed to help with harder cyber workflows.

How GPT-5.4-Cyber Changes Security Workflows

The first big change is reduced friction. Security teams do work that often looks suspicious in isolation. Reverse engineering. Investigating exploit chains. Reviewing proof-of-concept code. Querying for weaknesses.

General tools may over-block those activities. OpenAI says this model lowers that refusal boundary for legitimate security work.

The second change is workflow depth. OpenAI and reporting on the launch both point to support for advanced defensive tasks, including binary reverse engineering.

That matters because many real investigations happen without source code. Analysts are often staring at compiled software, memory artifacts, logs, and behavior traces.

A model that can help reason across those inputs is more than a generic cybersecurity chatbot. It starts to look like a real analyst-side tool.

The third change is governance. OpenAI’s Trusted Access for Cyber asks for identity verification and team-level access paths, and the company has said that higher-risk cyber capability should be tied to trust signals and deployment context, not treated as just another open consumer feature.

ChatGPT Cybersecurity Use Cases

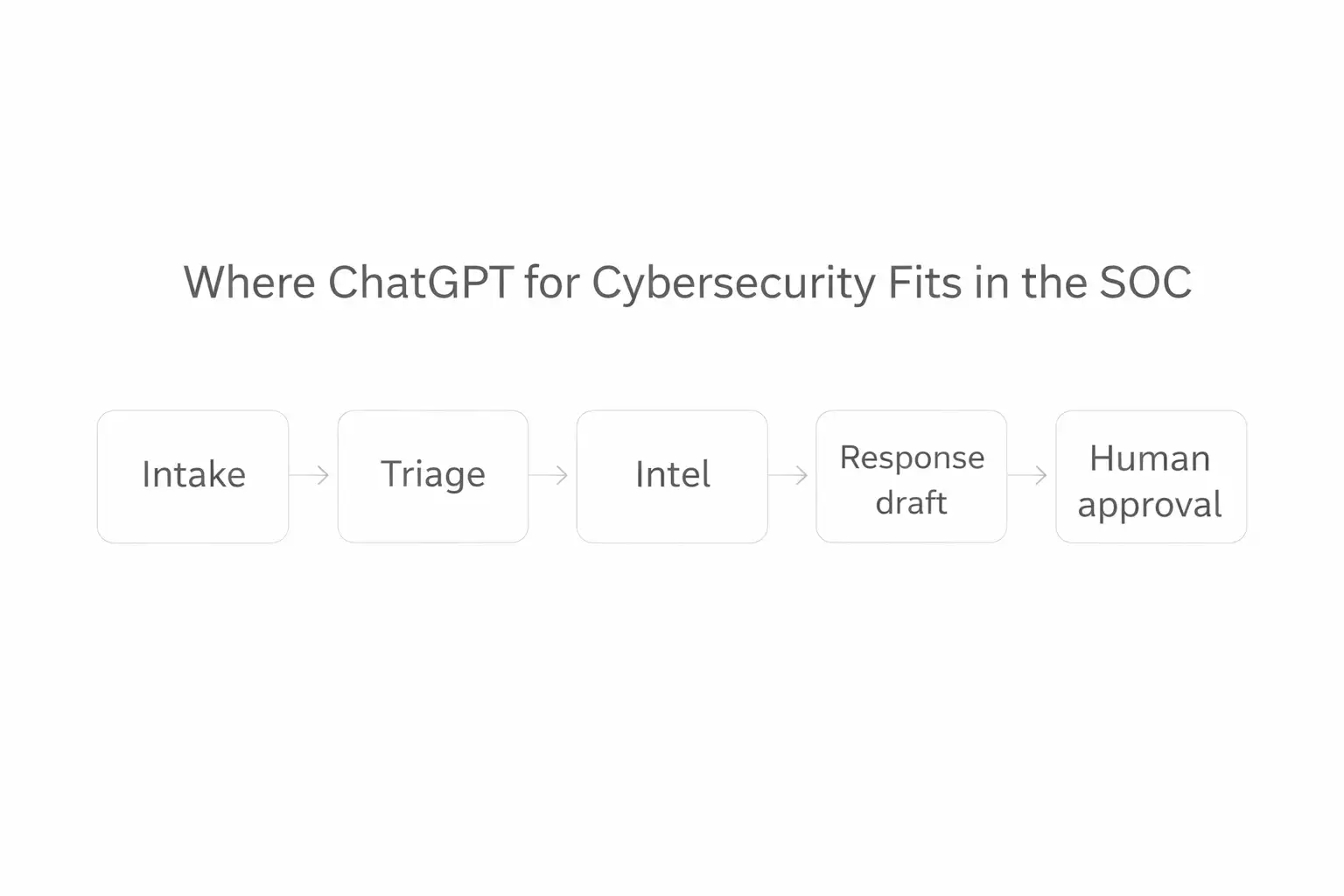

A lot of security content lists twenty use cases and explains none of them. That is not useful. The better way to think about ChatGPT cybersecurity use cases is to follow the analyst workflow.

ChatGPT for threat detection

This is one of the clearest fits. ChatGPT for threat detection can help turn a messy alert into a cleaner triage summary, explain suspicious command patterns, or map observed behavior to MITRE-style techniques. It should not make the final decision alone. It should help the analyst see faster.

ChatGPT for threat intelligence

ChatGPT for threat intelligence is not about replacing an intel team. It is about compression. Good analysts drown in reports, vendor blogs, detection writeups, and scattered indicators.

A strong cybersecurity AI assistant for enterprises can condense that pile into a priority brief with caveats, likely relevance, and follow-up questions.

ChatGPT for incident response

ChatGPT for incident response is where speed matters most. Drafting a first-pass timeline, summarizing what changed between two host snapshots, or writing a clear stakeholder update are all good jobs for a model. The trap is overtrust. If the model summarizes a false assumption, the error spreads fast.

ChatGPT for SOC analysts

ChatGPT for SOC analysts makes the most sense when it acts like a second set of eyes. Not a boss. Not a replacement. A quiet partner that shortens the time between “I see something odd” and “I know what to check next.”

AI in SOC operations

This is where the article needs to be honest. AI in SOC operations is useful when it reduces cognitive load.

It becomes dangerous when it creates fake confidence. The best AI chatbot for cyber defense teams is the one that leaves an audit trail, cites its reasoning inputs, and stays inside a workflow built for validation.

We have not had direct hands-on access to GPT-5.4-Cyber, and that limitation should be stated plainly because access is restricted.

What we have tested in our lab is the workflow class this model is meant to accelerate: alert summarization, incident-note drafting, IOC clustering, and suspicious script explanation using controlled sample data.

In our practical tests with restricted sample telemetry, the useful pattern was not “ask one perfect prompt.” It was staged prompting.

First ask for artifact classification. Then ask for missing evidence. Then ask for a short analyst note. That approach cut noise. It also exposed mistakes faster, because the model had to show its work in smaller steps.
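
The staged pattern can be sketched in a few lines of Python. This is a minimal illustration, not the harness we used: the stage wording is invented for the example, and `ask` is a hypothetical stand-in for whatever function sends a prompt to your model and returns its text.

```python
from typing import Callable

# Hypothetical stage templates; the wording is an assumption for illustration.
STAGES = [
    ("classification",
     "Classify this artifact (script, log, binary, other) and say why:\n{artifact}"),
    ("missing_evidence",
     "Given this classification:\n{previous}\nList the evidence that is still missing."),
    ("analyst_note",
     "Using only the points below, write a short analyst note:\n{previous}"),
]


def run_staged(artifact: str, ask: Callable[[str], str]) -> dict[str, str]:
    """Run each stage in order, feeding the previous answer into the next prompt.

    `ask` is any function that sends a prompt to a model and returns text.
    Every intermediate answer is kept so a reviewer can inspect each step.
    """
    results: dict[str, str] = {}
    previous = ""
    for name, template in STAGES:
        prompt = template.format(artifact=artifact, previous=previous)
        previous = ask(prompt)
        results[name] = previous
    return results
```

Keeping every intermediate answer, rather than only the final note, is the point: a wrong classification is visible at stage one instead of being buried inside a polished summary.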

We also hit the same challenge again and again: context contamination. If you feed a model raw notes, ticket fragments, and mixed-confidence intel, it tends to smooth rough edges into a cleaner story than the evidence supports.

That is exactly why a cybersecurity copilot must sit beside human review rather than ahead of it.
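
One way to push back on context contamination is to label every input with its source and confidence before it reaches the model, so mixed-quality material is never presented as one flat block. The sketch below is an assumption about how that could look; the `Evidence` fields and the three confidence levels are illustrative, not a standard.

```python
from dataclasses import dataclass

# Illustrative confidence levels; an unknown level raises KeyError on purpose.
CONFIDENCE_ORDER = {"confirmed": 0, "probable": 1, "unverified": 2}


@dataclass(frozen=True)
class Evidence:
    source: str       # e.g. "edr_alert", "ticket_note", "vendor_blog"
    confidence: str   # one of the keys in CONFIDENCE_ORDER
    text: str


def build_context(items: list[Evidence]) -> str:
    """Return one labeled line per item, strongest evidence first."""
    ranked = sorted(items, key=lambda e: CONFIDENCE_ORDER[e.confidence])
    return "\n".join(
        f"[{e.confidence.upper()} | {e.source}] {e.text}" for e in ranked
    )
```

Labels do not stop a model from over-smoothing, but they give the human reviewer a quick way to check whether a confident sentence in the output traces back to an unverified input.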

Risks and Limits

Here is the blunt version. Better defender tooling does not erase dual-use risk.

OpenAI’s own strategy language recognizes that the same cyber capability can serve patching or abuse depending on the user and context.

That is why access is gated and why the company keeps emphasizing identity checks, iterative deployment, and stronger safeguards for more permissive cyber models.

For enterprises, the top risks are familiar:

- sensitive data leakage through prompts

- hallucinated findings dressed up as confident analysis

- junior staff over-trusting polished output

- prompt injection or poisoned artifacts in connected workflows

- weak logging around model-assisted decisions

For defenders, the question is not whether ChatGPT is good for cybersecurity in the abstract. The real question is whether your deployment model keeps risky data, risky actions, and risky assumptions under control.

ChatGPT for Cybersecurity vs. Other Defender Tools

A general AI chatbot for cybersecurity is not the same thing as an XDR, a SIEM, or a malware sandbox. It does not collect telemetry by itself. It does not enforce policy. It does not replace detections. Its strength is interpretation and acceleration.

Compared with traditional tools, AI for cyber defense is strongest at summarizing, translating, prioritizing, and drafting.

Compared with a classic dashboard, it is better at answering “what does this likely mean?” Compared with a human analyst, it is worse at skepticism, environmental memory, and political judgment inside an organization.

That is why the best mental model is cybersecurity copilot, not autonomous defender. If a vendor markets full replacement, be careful.

How to Use ChatGPT for Cybersecurity Safely

Step 1: Define allowed tasks

Pick narrow workflows first:

- alert summarization

- detection explanation

- intel condensation

- draft reporting

- secure code review support
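
An allowlist like this is easier to enforce when it lives in code rather than in a policy document. Here is a minimal sketch, assuming each request to the model is tagged with a task name; the enum values and the `require_allowed` helper are hypothetical.

```python
from enum import Enum


class AllowedTask(Enum):
    ALERT_SUMMARIZATION = "alert_summarization"
    DETECTION_EXPLANATION = "detection_explanation"
    INTEL_CONDENSATION = "intel_condensation"
    DRAFT_REPORTING = "draft_reporting"
    SECURE_CODE_REVIEW = "secure_code_review"


def require_allowed(task_name: str) -> AllowedTask:
    """Return the matching task, or refuse anything not on the allowlist."""
    try:
        return AllowedTask(task_name)
    except ValueError:
        raise PermissionError(f"task not on the allowlist: {task_name!r}") from None
```

Calling `require_allowed` at the entry point of any model integration means a new use case has to be added deliberately, not by accident.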

Step 2: Strip sensitive data

Before sending any data to a model, remove secrets, live credentials, customer identifiers, private keys, and anything covered by regulatory controls.
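
A pre-send redaction pass might look something like the sketch below. The patterns are illustrative only and far from complete; a real deployment needs a reviewed pattern set and, ideally, a dedicated secret scanner. The `redact` function and its labels are assumptions made for this example.

```python
import re

# Illustrative patterns only; not a complete or vetted set.
PATTERNS = {
    "PRIVATE_KEY": re.compile(
        r"-----BEGIN [A-Z ]*PRIVATE KEY-----.*?-----END [A-Z ]*PRIVATE KEY-----",
        re.DOTALL,
    ),
    "AWS_ACCESS_KEY_ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "PASSWORD_FIELD": re.compile(r"(?i)\b(password|passwd|pwd)\s*[=:]\s*\S+"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace matches with placeholders and count what was removed."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[REDACTED_{label}]", text)
        if n:
            counts[label] = n
    return text, counts
```

Returning the counts alongside the cleaned text makes it possible to log how often analysts try to send sensitive material, without logging the material itself.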

Step 3: Use structured prompts

Ask for:

- evidence observed

- assumptions made

- missing information

- confidence level

- next validation step
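
Those five items can be turned into a contract the model's reply has to satisfy. The following is a rough sketch, assuming the model is asked to answer in JSON; the field names and both helper functions are made up for illustration.

```python
import json

# Field names mirror the five items listed above; the naming is an assumption.
REQUIRED_FIELDS = (
    "evidence_observed",
    "assumptions_made",
    "missing_information",
    "confidence_level",
    "next_validation_step",
)


def build_prompt(artifact: str) -> str:
    """Ask for a JSON object that contains every required field."""
    fields = ", ".join(REQUIRED_FIELDS)
    return (
        "Analyze the artifact below. Reply with one JSON object containing "
        f"exactly these keys: {fields}.\n\n"
        f"Artifact:\n{artifact}"
    )


def parse_reply(reply: str) -> dict:
    """Reject replies that are not JSON objects or that skip a field."""
    data = json.loads(reply)  # raises ValueError on invalid JSON
    if not isinstance(data, dict):
        raise ValueError("reply is not a JSON object")
    missing = [f for f in REQUIRED_FIELDS if f not in data]
    if missing:
        raise ValueError(f"reply is missing fields: {missing}")
    return data
```

A reply that fails `parse_reply` should go back for another attempt or to a human, never straight into a ticket.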

Step 4: Keep a human approval gate

No model should close an incident, approve a patch priority, or classify a breach alone.
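
In code, an approval gate can be as simple as refusing to run certain actions unless a named person has signed off. This is a sketch under assumptions: the action names, the `Proposal` type, and the `execute` function are hypothetical and stand in for whatever your ticketing or SOAR integration actually does.

```python
from dataclasses import dataclass
from typing import Optional

# Actions taken from the rule above; the identifiers are illustrative.
GATED_ACTIONS = {"close_incident", "approve_patch_priority", "classify_breach"}


@dataclass
class Proposal:
    action: str
    rationale: str                      # model-generated text, a suggestion only
    approved_by: Optional[str] = None   # set by a human reviewer, never by the model


def execute(proposal: Proposal) -> str:
    """Run the action only if a gated action carries a human approver."""
    if proposal.action in GATED_ACTIONS and not proposal.approved_by:
        raise PermissionError(f"{proposal.action} needs a named human approver")
    approver = proposal.approved_by or "n/a"
    return f"{proposal.action} executed (approved by {approver})"
```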

Step 5: Log outputs

If a model influences a security decision, preserve the prompt, the artifacts used, and the final human decision.
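
A minimal audit record could be built like this. It is a sketch, not a logging standard: the field names are assumptions, and artifacts are stored as SHA-256 hashes here on the assumption that the originals already live in an evidence store.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(
    prompt: str,
    artifacts: dict[str, bytes],
    model_output: str,
    decision: str,
    decided_by: str,
) -> str:
    """Return one JSON line tying the prompt and artifacts to the human decision."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt": prompt,
        # Hash artifacts instead of copying them into the log.
        "artifact_sha256": {
            name: hashlib.sha256(blob).hexdigest()
            for name, blob in artifacts.items()
        },
        "model_output": model_output,
        "human_decision": decision,
        "decided_by": decided_by,
    }
    return json.dumps(record, sort_keys=True)
```

Writing one JSON line per decision keeps the log easy to append to and easy to search later.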

Step 6: Check official advisories

Users should refer to official guidance from CISA, NIST, Microsoft, major cloud providers, and the original vendor documentation relevant to the affected system. For this specific OpenAI topic, the official OpenAI security and Trusted Access materials are the most direct source.

The first mistake is treating polished language as proof. A model can sound certain and still be wrong.

The second is using one giant prompt. Break work into stages. Ask what is known, what is inferred, and what is missing.

The third is trying to turn a model into a fully autonomous SOC. That sounds efficient. It usually means you have moved risk out of sight.

OpenAI’s broader security direction matters here. The company introduced Trusted Access for Cyber in February 2026, tied it to stronger safeguards and defender access, and said it was committing $10 million in API credits to accelerate cyber defense.

It has also linked this work to a larger resilience effort that includes grants and application security tooling.

This tells us where the market is headed. More specialized cyber models. More gating. More emphasis on who gets access and under what controls.

The frontier question is no longer only “How capable is the model?” It is “Can the deployment stay defensible as capability rises?”

Security Checklist

1. Classify your allowed use cases today.

If your team cannot name three safe uses for a model, you are not ready to roll one into production.

2. Ban high-risk prompt content now.

No secrets, no live customer data, no unapproved sensitive artifacts.

3. Require human sign-off on every security decision.

Treat model output as analyst support, not final truth.

