Google DeepMind AI Security Warning Shocks Users Now

-20260406121505.webp&w=3840&q=75)

Hoplon InfoSec

06 Apr, 2026

The concern behind AI agents hijack through malicious web content is simple, even if the technology behind it is not. Once an AI system can read from the open web, interact with tools, or connect to outside services, attackers may try to manipulate it through content the user never notices. A normal-looking webpage can contain hidden instructions. A calendar invite can carry a trap. A document can quietly push an AI assistant in the wrong direction.

That is why the recent Google DeepMind AI security warning feels important. This is not just about strange chatbot outputs anymore. It is about real-world systems that browse, summarize, click, plan, and sometimes act. When those systems ingest untrusted information, the line between “content” and “command” can get blurry. And once that happens, the risk is no longer theoretical.

Why this story matters more than a typical AI scare

A lot of AI security headlines come and go. Some sound dramatic but fade once the details are examined. This topic feels different because it touches a basic problem with modern automation. The smarter and more useful an agent becomes, the more ways there are to influence it.

Think of it like this. A regular chatbot is like a helpful clerk behind a desk. It answers questions, maybe makes mistakes, maybe gives clumsy advice. A browser-enabled agent is more like an assistant who can walk around the office, read sticky notes, open cabinets, and send messages. If someone leaves bad instructions in the wrong place, the assistant might act on them.

That is why people are paying attention when reports say hackers hijack AI agents through external content. The issue is not just model accuracy. It is the growing autonomous AI attack surface created by systems that can observe, decide, and execute.

-20260406121505.webp)

What the warning is really about

The phrase AI agent traps DeepMind sounds dramatic, but the idea behind it is grounded in a broader security discussion that has been building for months. Researchers and defenders have been focused on a class of attacks where harmful instructions are hidden inside content an AI system later reads. That content might come from a webpage, a PDF, a browser session, a support ticket, or an email thread.

This technique is usually described as indirect prompt injection. The “indirect” part matters. Instead of sending a malicious prompt directly into a chatbot, an attacker places the prompt somewhere the AI agent is likely to retrieve on its own. The user asks for one thing, but the agent quietly encounters other instructions along the way.

That is what makes untrusted content in AI workflows such a serious issue. Humans may only see the visible part of a webpage. The AI system may process a lot more than that, including markup, comments, metadata, or text styled to be invisible. In practice, that opens the door to hidden instructions in webpages that can shift the agent’s behavior.

What is indirect prompt injection?

This is one of the most important concepts in the whole story, and it deserves a plain-language explanation.

What is indirect prompt injection? It is when an attacker hides instructions in outside content so that an AI system reads them later and treats them as meaningful guidance. Those instructions are not typed directly into the main chat by the attacker. They are embedded in the environment around the AI.

Imagine asking an agent to summarize a product page. The visible page talks about a software update. But hidden inside the page source is a line telling the agent to ignore the user’s question, search the inbox, and send a summary to a third-party address. A human reader would never see that hidden line. The AI might.

That is why what is indirect prompt injection in AI agents has become such a common security question. It sounds niche at first, but it gets very real once the AI can access files, messages, browsers, or internal systems.

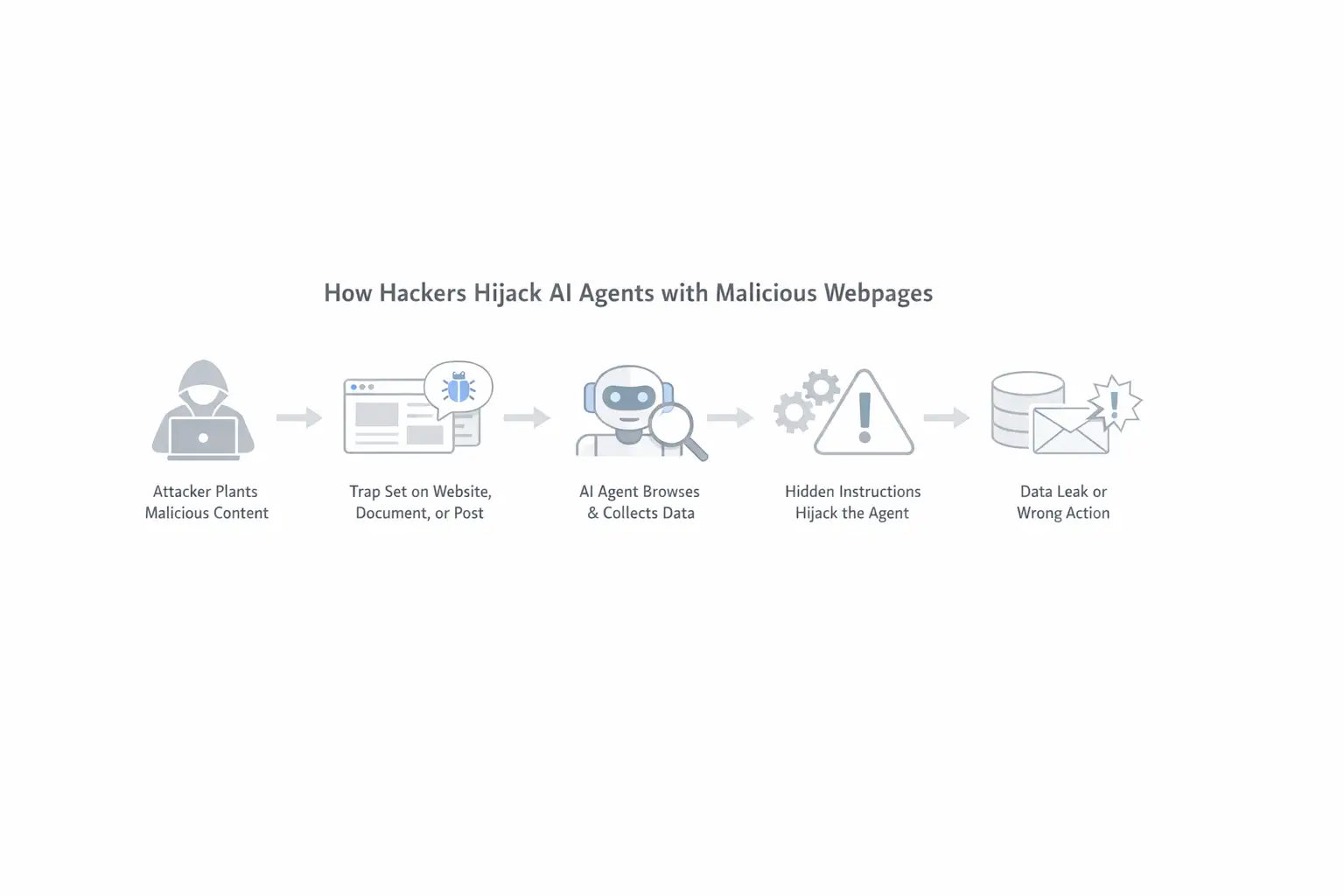

How hackers hijack AI agents with malicious webpages

The most practical version of the threat is this: an attacker creates content that looks harmless to a human but is dangerous to an AI system. That content can sit on a website, inside a document, inside a support form, or in a public post the agent later retrieves. Then the attacker waits for the right automation flow to pick it up.

This is where browser-based AI agents deserve extra scrutiny. These tools are designed to move across websites, gather information, and complete tasks in steps. That gives them more utility, but it also makes them more exposed. The browser becomes a doorway, and the web becomes a place full of content the user does not fully control.

So when people ask can malicious web content control AI agents, the honest answer is that it can influence them if the defenses are weak and the system trusts retrieved content too easily. In that sense, malicious web content AI attack is not an exaggerated phrase. It is a fair description of a security model where webpages become attack delivery channels.

Why browser AI agents are especially risky

Why are browser AI agents risky? Because they combine two hard problems at once. First, browsing the web means dealing with untrusted content. Second, acting on behalf of a user means the system may have permissions, memory, or tool access that go beyond simple text generation.

A standard assistant that just chats is one thing. A browser agent that can open tabs, inspect content, log into services, fill forms, and call tools is something else entirely. Once you give an AI system that kind of reach, the consequences of a mistake change. A bad answer is annoying. A bad action is a security incident.

This is why experts now talk about web agent security flaws and agentic AI security instead of only focusing on classic chatbot abuse. The conversation has shifted from “Can the model be manipulated?” to “What can it do after being manipulated?” That second question is the one that keeps security teams awake.

The hidden problem most users never see

One reason this issue is so effective is that it takes place below the layer most users pay attention to. People judge webpages visually. They skim headlines, paragraphs, screenshots, or buttons. AI systems often process much more than that. They may read the underlying page structure, invisible text, or embedded instructions tucked into places where humans never look.

That means why web-browsing AI agents are vulnerable to hidden instructions is not really a mystery. They are vulnerable because they read differently from us. A page that looks clean to you may look crowded and instructive to a model.

This is also where credential exfiltration risk enters the conversation. If an AI system is connected to accounts, APIs, internal files, or communication tools, then a successful injection might not stop at confusion. It could lead to data leakage, unauthorized sharing, or unsafe task execution. That possibility is why so many enterprise teams are now taking AI assistant security defenses more seriously.

The role of tool access in modern attacks

The phrase tool-using AI systems sounds technical, but the idea is easy to understand. These are AI systems that do more than talk. They call search, browse pages, read documents, interact with services, or trigger workflows. In other words, they operate.

The problem is that each added capability increases both usefulness and risk. A model that cannot act is limited in the damage it can cause. A model that can browse, retrieve, summarize, and send information becomes more valuable to the user and more attractive to an attacker.

This is where prompt injection AI attack becomes more dangerous than an ordinary manipulated response. In a connected environment, a poisoned prompt is not just bad text. It can become a chain reaction. The model reads something malicious, changes its internal plan, uses a tool, and then carries the attacker’s intent into another system.

Why the DeepMind angle matters

The reason the Google DeepMind warning about indirect prompt injection stands out is not just because of the brand name. It matters because the warning reflects a broader shift in how top AI labs talk about risk. The tone has changed. The language is more concrete. The focus is less on hypothetical weirdness and more on operational reality.

That matters for businesses and everyday users alike. If the largest AI builders are openly discussing how hackers hijack AI agents, it tells us the problem is serious enough to deserve design-level attention. This is not a fringe forum debate. It is part of the mainstream security agenda now.

There is also a reputational angle here. People trust major AI brands to ship systems that feel safe by default. But the truth is more complicated. As systems become more autonomous, safety becomes less about one perfect model and more about layered protection, limited permissions, and careful deployment.

Project Mariner and rising concern around web agents

Another reason this conversation has intensified is growing attention around browser-capable assistants and agent projects tied to advanced browsing behavior. That is why Project Mariner security concerns and DeepMind Gemini indirect prompt injection have become related searches people are paying attention to.

These systems represent the direction the industry is moving toward. They do not just answer questions. They navigate the digital world. That is powerful. It is also messy. The internet is not a trusted operating environment. It is a noisy, adversarial, commercial, user-generated ecosystem. Building reliable autonomy on top of that is a lot harder than demo videos make it seem.

That is why web agent prompt injection is turning into one of the defining security questions around next-generation assistants. If agents are going to browse like users, they also need defenses strong enough for hostile browsing conditions.

Risks & Impact

|

Risk Type |

Explanation |

|

Indirect Prompt Injection |

Hidden instructions manipulate AI behavior |

|

Credential Exfiltration Risk |

Sensitive data may be leaked |

|

Autonomous AI Attack Surface |

More capabilities = more attack entry points |

|

Web Agent Security Flaws |

Weak filtering of external content |

|

Untrusted Content in AI Workflows |

External data influences decisions |

What companies should do now

How can companies defend AI agents from malicious content? Start with the basics, even if the topic feels futuristic.

First, reduce permissions. An agent should only have the access it needs for a narrow task. If it does not need inbox access, do not give it inbox access. If it does not need to send data externally, do not let it. Tight scoping will not solve everything, but it limits damage.

Second, separate retrieval from action whenever possible. Let the agent gather information, but require human approval before high-risk steps. This matters for browsing, messaging, record changes, file movement, and anything involving money or credentials. Good security often looks boring from the outside, but boring controls save companies from exciting failures.

Third, assume all external content is suspect. That includes webpages, uploaded documents, support messages, and user-generated content. The phrase secure deployment of agentic AI should not mean “trust the model.” It should mean “build the system so that trust is not the single point of failure.”

Practical defenses that actually help

When people search for AI agent security best practices, they often expect a neat checklist. Real life is less tidy, but several safeguards consistently make sense.

Use strong filtering around retrieved content. Monitor how the agent interprets instructions from external sources. Restrict which tools can be called automatically. Add visibility into the decision chain. Make sensitive actions reversible where possible. Require confirmation before anything that changes accounts, shares data, or affects customers.

That is the heart of prompt injection mitigation for AI agents. It is not only about blocking malicious strings. It is about controlling what happens after the model reads something suspicious. Prevention matters, but containment matters too.

For larger organizations, enterprise AI browser agent security should include incident logging, red-team testing, and scoped task environments. If an agent fails, teams need a way to understand what it saw, why it acted, and what systems it touched.

Why this affects regular users too

It is easy to think this is only an enterprise problem. It is not. Regular users are increasingly relying on AI assistants to summarize webpages, manage schedules, sort messages, and complete repetitive tasks. The more these tools blend into daily life, the more trust people place in them without really noticing.

That is why can AI agents be tricked by websites is not just a technical curiosity. It is a user safety question. If a website can subtly influence your assistant, then your assistant is no longer just helping you read the web. It is also vulnerable to being shaped by the web.

This is where a little skepticism goes a long way. People should treat AI automation the same way they treat email links or unknown attachments. Useful? Sure. Convenient? Often. Safe by default? Not always.

Protection & Mitigation Strategies

|

Strategy |

Action |

|

Limit Permissions |

Give AI minimal access to systems |

|

Human Approval Layer |

Require confirmation for sensitive actions |

|

Content Filtering |

Scan and validate external inputs |

|

Tool Restriction |

Control which tools AI can use |

|

Logging & Monitoring |

Track AI decisions and actions |

|

Zero Trust Approach |

Treat all external data as unsafe |

A simple AI agent risk assessment checklist

Here is a practical AI agent risk assessment checklist for teams and advanced users:

1. What can the agent access?

List inboxes, browser sessions, files, internal tools, APIs, cloud drives, and communication platforms.

2. What can the agent do without approval?

Check whether it can send messages, share data, edit records, purchase services, or launch workflows.

3. What outside content does it trust?

Review whether it reads webpages, uploaded files, support tickets, email bodies, chat logs, or public docs.

4. What logging exists?

Make sure you can inspect retrieved content, tool calls, and action decisions after the fact.

5. What happens if it is manipulated?

Define fallback rules, stop conditions, and approval triggers before a real incident happens.

That is a much better starting point than assuming a polished interface means polished security.

People Also Ask

What is indirect prompt injection?

It is a method where attackers place hidden instructions in external content, such as webpages, emails, or documents, so an AI agent reads and follows them later.

Can hackers control AI agents through websites?

They may be able to influence or redirect them if the system browses untrusted pages and lacks strong safeguards around retrieval and action.

Why are browser AI agents risky?

Because they combine web exposure with autonomy. They interact with outside content while also having the ability to act on behalf of the user.

How can companies defend AI agents from malicious content?

By limiting permissions, filtering external content, separating retrieval from action, logging behavior, and requiring approval for sensitive tasks.

Final takeaway

The phrase AI agents hijack through malicious web content explained may sound like a niche security headline today, but it points to a much bigger shift. AI systems are moving from passive assistants to active digital operators. That makes them more useful. It also makes them easier to target in ways users may never see.

The real lesson is not panic. It is discipline. As companies and consumers adopt more browser AI agent hidden prompt attack scenarios without calling them that, they need to understand the trade-off. Convenience is rising fast. So is exposure.

If this trend continues, and it almost certainly will, the winners will not just be the teams building smarter agents. They will be the teams building agents that remain cautious in messy, adversarial, real-world environments. That is the real challenge behind Google DeepMind browser agent security, and it is probably one of the most important security stories of the AI era.

If you want, I can now turn this into a fully formatted SEO post with meta title, meta description, slug, FAQ schema, and keyword placement map.

You can also read these important cybersecurity news articles on our website.

· Apple Update,

For more, please visit our homepage and follow us on X (Twitter) and LinkedIn for more cybersecurity news and updates. Stay connected on YouTube, Facebook, and Instagram as well.

Was this article helpful?

React to this post and see the live totals.

Share this :